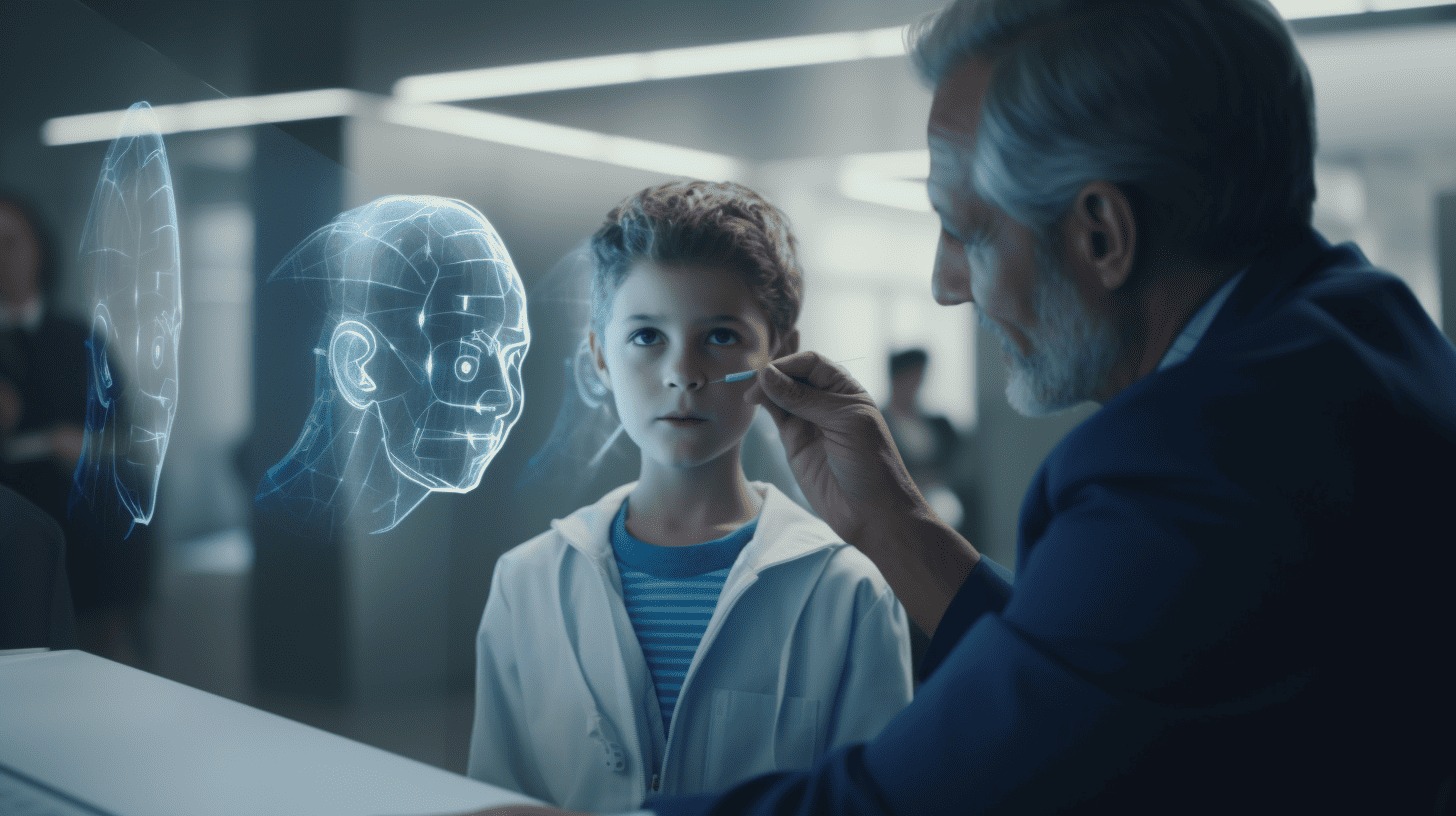

Last June, a number of American mathematicians issued an open letter urging their colleagues not to cooperate with the police. Among other things, they criticized predictive policing and facial recognition, because the data used to train AI systems is often biased. This can have discriminatory repercussions for minority groups, such as the black community. The initiators emphasize that, as mathematicians, they would in effect be lending a ‘scientific’ cachet to these policing practices, whereas a scientific basis is in fact lacking. “It is simply too easy to create a ‘scientific’ veneer for racism,” they wrote. The letter reads as a plea for ethics in AI and clearly points out that AI technologies, although rooted in mathematical models, are by no means ‘neutral’.

Shortly after the Second World War, in the 1940s, the American mathematician Norbert Wiener already alerted engineers and academics to their moral responsibility, especially when it came to collaborations for military purposes. The Manhattan Project had made it abundantly clear to Wiener how drastic the consequences of such collaborations could be. It was for this reason that he refused to enter into them.

It intrigues me that we still so readily put legal questions (is it legal?) ahead of moral ones (is it ethical?). Google’s Project Maven, for example, a partnership with the Pentagon for military applications of machine learning, only fell apart once Google’s employees objected to it.

Moral responsibility is more arbitrary

Obviously, legal liability is clearer: you can delve into existing legislation and see if it is being violated. Moral responsibility is much more arbitrary and also easier to circumvent, at least until your conscience gets in the way. But we are also creative in this respect: we set all manner of mechanisms in motion to distance ourselves from our moral responsibility. This is called moral disengagement. Well-known examples are found in the use of language. A CEO who has to lay off a large number of employees can justify their actions by stating that this is how they will “save the company,” and thereby shift the focus away from human suffering.

Numerous philosophers have already explored the relationship between ethics and responsibility. Philosopher Hannah Arendt defined morality as the inner dialogue that you have with yourself. In her view, morality is invariably synonymous with taking individual responsibility. She underlines the importance of maintaining a dialogue with yourself, as well as thinking and judging for yourself. This is the only way to become someone, that is, a moral individual. To Arendt, thoughtlessness and slavishly following others are the source of evil. Being able to think and judge also entails subjecting yourself to critical self-analysis and immersing yourself in the deepest and darkest recesses of your inner being.

Rather than revising or expanding legislation, we could perhaps benefit more from having more inner dialogues, along with an alarm that would ideally go off whenever we resort to fallacies and moral disengagement during critical self-analysis. Does an algorithm or app already exist for this?

About this column

In a weekly column, alternately written by Buster Franken, Eveline van Zeeland, Jan Wouters, Katleen Gabriels, Mary Fiers, Tessie Hartjes, and Auke Hoekstra, Innovation Origins tries to find out what the future will look like. These columnists, occasionally supplemented with guest bloggers, are all working in their own way on solutions for the problems of our time. So tomorrow will be good.