How will robots change the world? It is a frequently asked question that remains unanswered. After all, we do not have a crystal ball. What we do know is that digitalization and automation have changed the world enormously in recent decades. At Eindhoven University of Technology (TU/e) in the Netherlands, researchers study the potential of smart machines in industry and daily life every day. Scientists immerse themselves in technology, and student teams work on concrete solutions to social problems. This series tells you about the latest robots, their background, and their future. Today, the eighth episode: autonomous-driving robots.

Self-driving vehicles with a predetermined task and a limited speed, such as garbage trucks, could make their entrance into society in the years to come. The technology is sufficiently developed for that, according to Gijs Dubbelman, head of the Mobile Perception System Lab at Eindhoven University of Technology (TU/e, the Netherlands). He does not, however, expect to see a self-driving car navigating the inner city of Amsterdam anytime soon. “The artificial intelligence doesn’t have enough insight into traffic for that yet; it’s just not powerful enough,” Dubbelman explains.

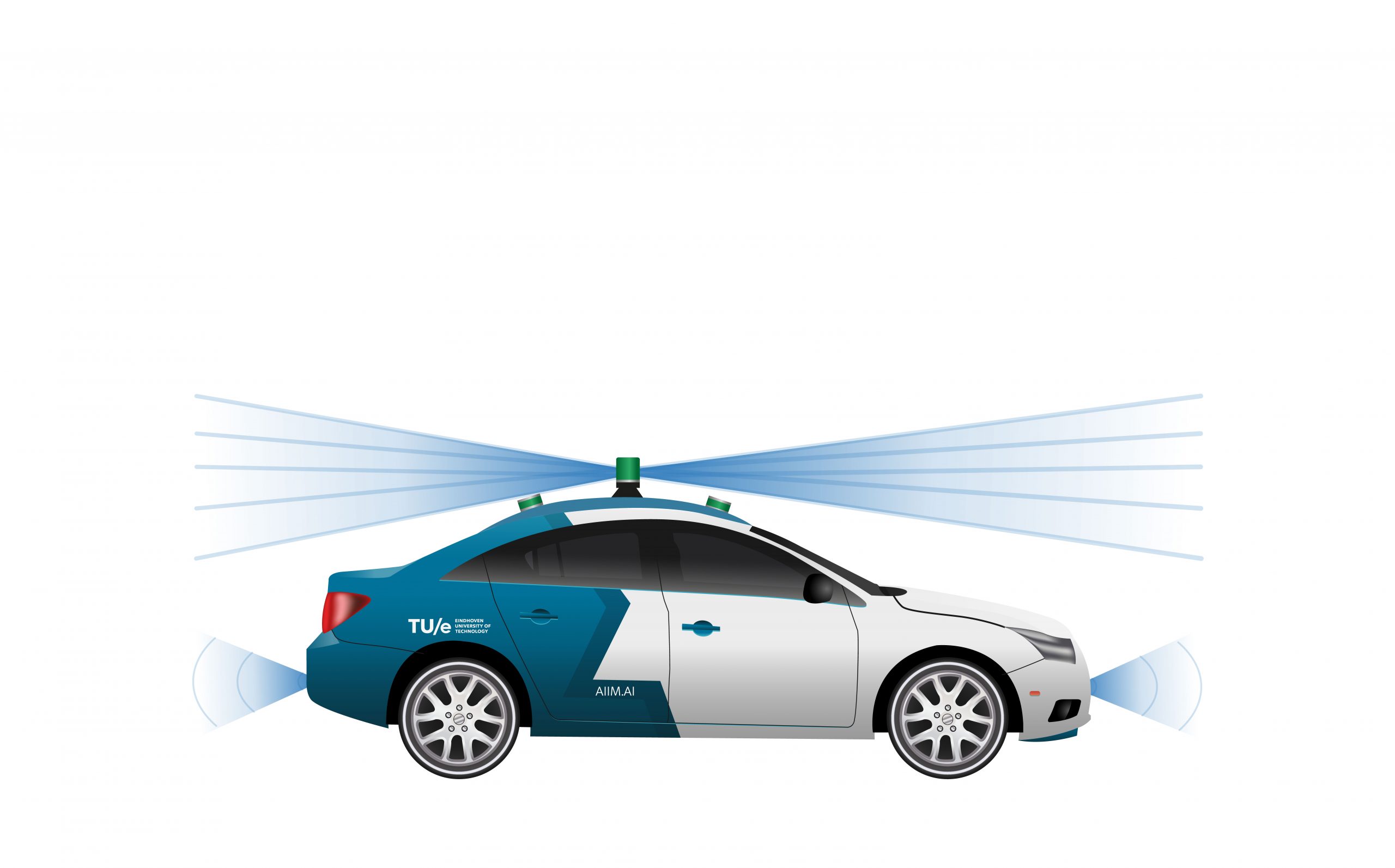

How do mobile robots perceive their surroundings? That is what scientists in the Mobile Perception System Lab are researching. “We take data from sensors and cameras and use artificial intelligence to make sure that the robot gets as complete a view of the world as possible,” says Dubbelman. Through that digital worldview, the robot knows where it is and what is going on around it. “We make the robot’s brain,” he says. Dubbelman focuses entirely on developing the artificial intelligence that lets the robot build up this digital worldview. The robot can then make decisions based on that worldview, which is what other labs within the university are working on.

Anticipate

“The eyes of a robot, the sensors, are much better than those of a human,” says Dubbelman. Sensors, cameras and radars register far more than the human eye can. Whereas people can only estimate the distance to an object, a radar can measure it to within a centimeter. The challenge for the researchers is to extract from the sensor data the information that cannot be measured directly. For instance, whether a pedestrian is going to cross the road cannot be measured, but it can be estimated using artificial intelligence. All this information contributes to a worldview that is as complete as possible.

“We want a robot, just like human drivers, to be able to predict what will happen in the short term, so that it can anticipate traffic,” explains Dubbelman. As humans, we are constantly scanning our surroundings. If a cyclist moves a little to the left, we can already estimate that this cyclist might turn left soon. We then take this into account by paying more attention and driving a little slower. Dubbelman: “At present, the robots’ worldview is not yet complete enough to be able to do this.”

Recognize

“In order to be able to anticipate events, we are currently developing an algorithm that can distinguish not only an object but also its components,” he continues. The algorithm can now recognize parts of, for example, a person or a car. “If we know what a head is and what feet are, we can make this worldview more complete,” says Dubbelman. Eventually, the system can also use this information to make decisions. “When the car can recognize a face, for instance, it can tell whether pedestrians are looking at the car. If they are, they’re less likely to cross the road,” he goes on. “In this way, the system can also recognize whether there is an adult or a child on the curb. A child is more likely to just cross, so the car can anticipate that.”

Self-learning cars

The researchers are developing self-learning systems to make this possible in the future. “At the moment, the algorithm learns from human-labeled data. People indicate, for example, what a bicycle is and what a tree is,” he explains. “We are in the process of developing a self-learning algorithm.” Over the past few months, the researchers have succeeded in creating an algorithm that has learned to convert a simple picture into a digital worldview.

At the moment, the algorithm can only work with simple line drawings, although its capabilities are constantly being expanded. “The system no longer learns from data labeled by humans, but is now self-learning. This allows it to analyze increasingly intricate and detailed images, which in turn leads to a more complete worldview,” says Dubbelman. A system that learns by itself is much more robust and copes better with changes in the world around it. “This is important because the world around us is constantly changing,” he notes.

Attentive driver

The learning process of the algorithm is comparable to that of a child. “We have been riding in cars with our parents since childhood. That is how we gain insight into how the world works,” Dubbelman observes. He draws a lot of inspiration from the way children learn. “Nevertheless, AI is not nearly as powerful as our own brains.” The researcher hopes to develop the autonomous car to the point where the algorithm is as good as an experienced, attentive driver. “Then we will be able to prevent a lot of accidents.” And one major advantage autonomous cars have over people? “Cars and infrastructure will be able to communicate with each other in the future. As a result, far fewer unanticipated things will happen, and there will probably be fewer accidents as a consequence,” Dubbelman claims.

Talent needed

The scientists will further develop the self-learning aspects of the autonomous car over the coming period. Achieving this much-needed research breakthrough will require a lot of money. “In America, large companies such as Google, Facebook, and Uber are engaged in this kind of fundamental research. In China, the government and the business community are also investing heavily in this area. Europe should be doing a bit more of that too,” Dubbelman believes. But money is not everything. “You also need talent. Europe is a great place to live and work, so that is an advantage,” he points out. “The government should develop a solid ecosystem for talent and investors.”

Dubbelman is positive about the legislation concerning autonomous driving. “If a computer has to make decisions about life and death, there ought to be clear rules for that. Proper testing procedures must also be drawn up for this purpose,” he says. “The Dutch Road Transport Agency (RDW) is already working on this for autonomous vehicles. The frameworks are not yet ready, but neither is the technology.” In his view, both must be developed in parallel. “There has to be a balance between safety and efficiency. Driving at high speed is efficient but not yet safe enough, while driving slowly is safer but less efficient,” says the researcher. “That’s why I think we will first see autonomous vehicles in applications where speed is less important, for example parcel delivery vehicles and garbage trucks. If we can advance the algorithm to the level of an experienced driver, then an autonomous passenger car in busy urban traffic will also be possible.”

Are you curious about the other remarkable robots at the TU/e High Tech System Center? Read the previous episodes here.