The medical field is on the brink of a transformative shift with the integration of artificial intelligence (AI), but the journey is not without its obstacles. Over 500 AI tools have been cleared by the US FDA; however, their adoption by physicians remains limited due to scepticism surrounding their effectiveness. While AI has demonstrated potential in tasks ranging from diagnosis to predicting tumour responses, the performance of these tools still falls short of that of human clinicians.

- AI shows potential in healthcare, from increasing clinician efficiency to aiding diagnosis, but scepticism limits adoption.

- Current legal regulations for medical AI in Europe are insufficient, prompting the development of the proposed AI Act.

- Despite some promising AI programs, the integration of AI into healthcare faces significant hurdles, such as the need for local adaptation.

AI and clinical tasks

The application of AI in healthcare extends beyond machine-learning diagnostic tools. For instance, the healthtech firm Kry has integrated AI into its services, leading to a 20% increase in clinician efficiency. The AI is designed to handle non-medical administrative tasks such as scheduling appointments and looking up addresses, tasks which Kry’s COO, Kalle Conneryd-Lundgren, believes do not add value to patient care. By taking over these tasks, the AI frees up the equivalent of 10,000 additional patient appointments per month, enabling clinicians to engage more with patients.

The AI is not a replacement for clinicians but a tool to eliminate unnecessary tasks and increase the time spent with patients. The ultimate goal is to provide more personalised care. However, for AI to play a more significant role in healthcare, a solid legal framework is needed.

Regulating AI in healthcare

Currently, there is no specific legal regulation of medical AI in Europe, but it is subject to international human rights conventions and EU regulations. The GDPR and the European Convention on Human Rights guarantee the right to protection of personal data, the right to privacy, and protection against discrimination, all of which apply to medical AI.

However, this is not enough. The EU is developing the proposed AI Act, which would classify AI systems according to risk and establish obligations for suppliers and users. Additionally, the Council of Europe is working on a legally binding international instrument on AI and fundamental rights. The aim is to reduce the fragmentation of the normative landscape for medical AI in Europe, beyond the borders of the EU and its 27 Member States.

AI in patient care: a double-edged sword?

Even with FDA clearance for so many AI programs, doctors have been reluctant to incorporate them into patient care. They have expressed concerns about the effectiveness of these AI tools and the lack of solid research supporting them. Despite President Biden’s executive order calling for more funding for AI research in medicine, doctors remain sceptical. As Dr. Jesse Ehrenfeld, President of the American Medical Association, puts it, “if physicians are going to incorporate these things into their workflow… we’re going to have to have some confidence that these tools work”.

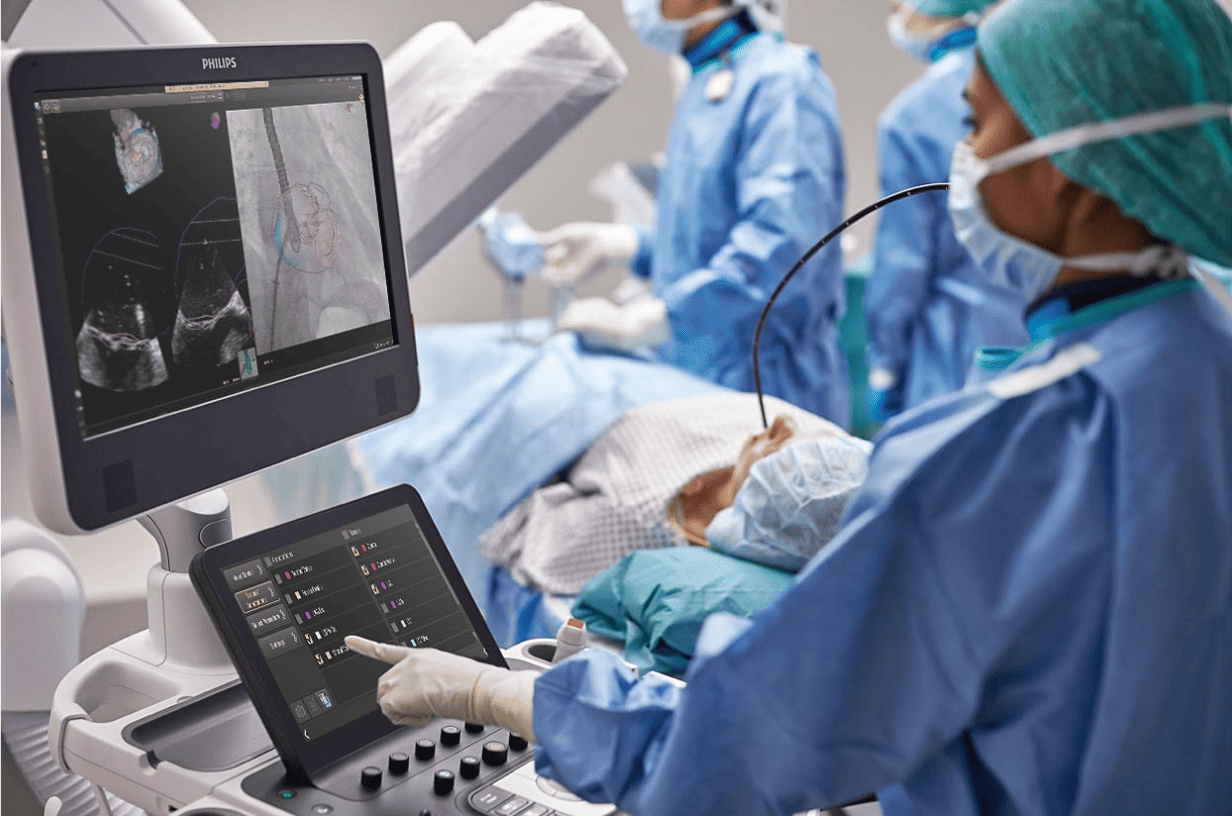

However, it’s not all doom and gloom for AI in healthcare. Some AI programs have shown promising results. For instance, the AI software ‘Mia’, developed for breast screening, was able to detect abnormalities that might have been missed with current screening procedures. Similarly, Philips has developed the ePatch, a wireless device for diagnosing cardiac arrhythmia, which could lead to early detection of heart defects and shorten healthcare queues.

Conquering AI challenges

Despite these promising developments, the progress of AI in healthcare faces significant hurdles. It’s important to ensure that AI programs are tailored to local conditions and technology: the performance of AI tools like ‘Mia’ improved significantly when adjusted for local conditions. It’s also crucial to ensure that AI tools deliver on their promises after clearance, a challenge acknowledged by the FDA.

Moreover, the integration of AI into healthcare requires a multidisciplinary approach. Erasmus MC and TU Delft have opened the first AI ethics lab for healthcare, aiming for ethically sound and clinically relevant AI that benefits both healthcare and healthcare workers[7]. This initiative underscores the importance of collaboration between physicians, engineers, nurses, data scientists, and ethicists in addressing the challenges surrounding the safe testing and integration of AI in patient care.