Artificial general intelligence (AGI) has sparked debate among AI experts, many of whom are sceptical that algorithms like GPT-4 will surpass human intelligence anytime soon. Although GPT-4 can pass standardised tests such as the bar exam, it still struggles with simple tasks, which raises doubts about any claim that it is sentient.

While there’s no concrete definition of AGI, experts have proposed various tests, such as Apple cofounder Steve Wozniak’s coffee-making challenge or Nils John Nilsson’s proposal that an AI be able to function in a range of human jobs. OpenAI CEO Sam Altman vaguely defines AGI as anything “generally smarter than humans.” In robotics, a lack of data hampers progress, and robots can only perform narrowly defined tasks. As discussions around sentient AI continue, experts like Suresh Venkatasubramanian warn against overlooking real-world harms that have already manifested.

What Defines AGI?

One of the first challenges in discussing AGI is the lack of a universally accepted definition. Some researchers, such as University of Montreal professor Yoshua Bengio, believe that building an AI with human-level intelligence is possible because the brain is a machine that just needs deciphering. Others, like Jerome Pesenti, head of AI at Facebook, express scepticism about the term and its meaning. Critics argue that current AI systems can only perform single tasks well and that the belief in AGI often involves abandoning rational thought. Proponents, on the other hand, see AGI as the ultimate goal of AI research and believe it could help solve complex global challenges, such as climate change and public health crises.

Various tests have been proposed to determine whether an AI system possesses human-level intelligence. Steve Wozniak’s coffee-making challenge focuses on a machine’s ability to interact with the physical world and carry out varied tasks. Nils John Nilsson proposed a test in which an AI system would have to function as an accountant, a construction worker, or a marriage counselor. However, these tests do not provide a precise definition of AGI, leaving the field open to debate and disagreement.

Brain-Inspired AI: A Path to AGI?

AGI researchers often draw inspiration from the human brain, seeking to replicate its principles in intelligent machines. Current work in brain-inspired AI focuses on characteristics of human intelligence such as scaling (the capacity to handle increased complexity), multimodality (the integration of information from various sources), and reasoning (logical thinking and problem-solving). In-context learning (the ability to learn from surrounding context) and prompt tuning (adapting models to perform specific tasks) are considered vital technologies on the path to AGI.

Despite these developments, scepticism about AGI remains, with some experts doubting that machines with human-level intellect can be built at all. Machine consciousness, a potential characteristic of AGI, is itself a debated concept within the AI community. The lack of a clear definition for AGI and the challenges in replicating human intelligence in machines continue to fuel debate over the possibility of AGI becoming a reality.

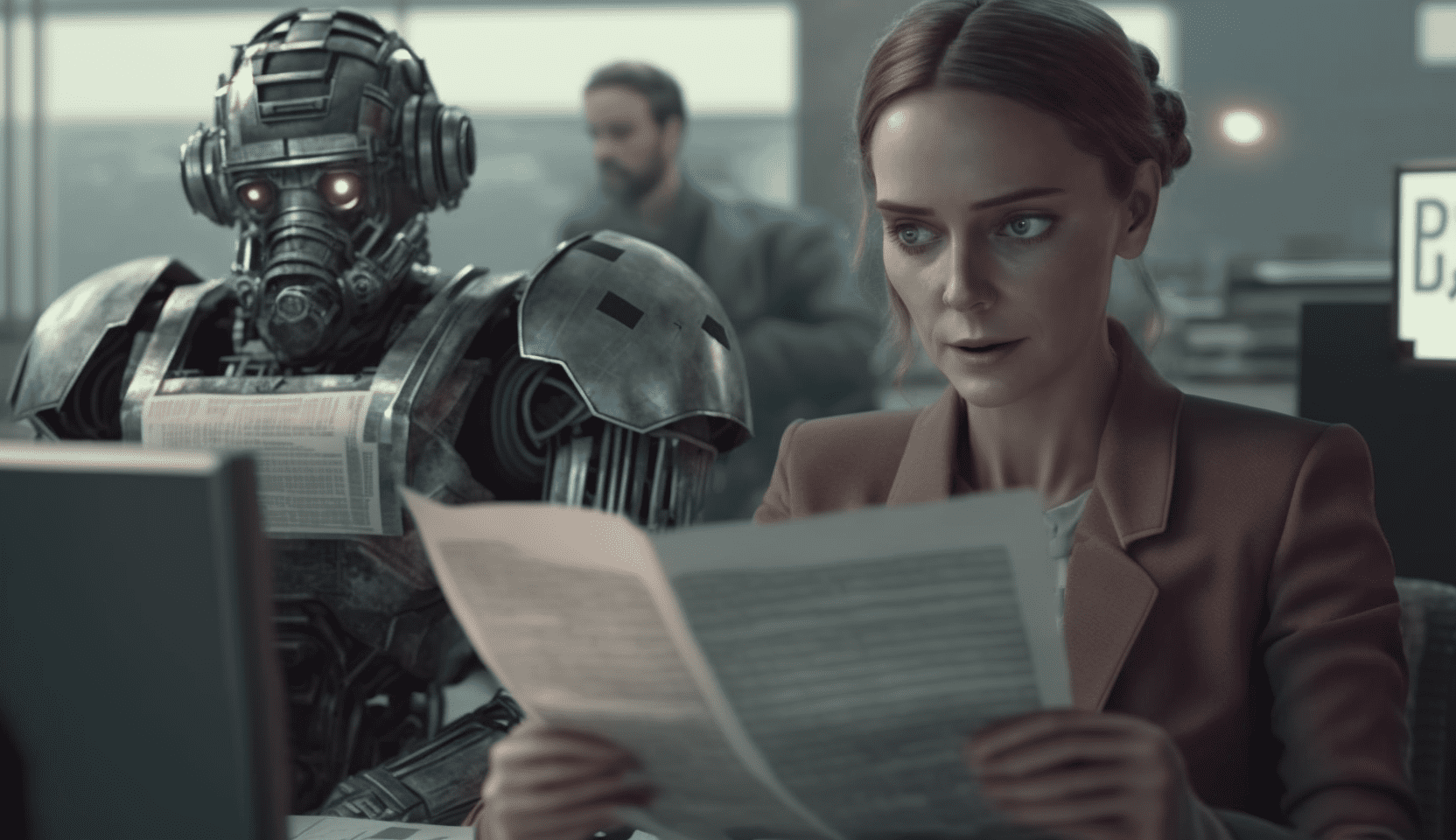

Limitations of Robotics and Data

Robotics is another field where the pursuit of AGI faces significant challenges. A primary limiting factor in robotics is the lack of data compared to other AI domains like natural language processing, which has access to vast amounts of textual data[1]. Robots can only perform very narrowly defined tasks, and even when paired with advanced chatbots, they are unable to achieve much independently.

Chelsea Finn, an assistant professor at Stanford University who leads the Intelligence Through Robotic Interaction at Scale (IRIS) research lab, views the development of more generalised and helpful robots as orthogonal to sentience. She states that the focus should be on creating robots that can perform useful tasks rather than aiming for sentience. While robotics research continues to explore fascinating ways to make robots more generalised and better at learning, advanced robots are still far from interacting spontaneously with the world around them or possessing human-like intelligence.

Focusing on Real-World Harms

While AGI remains a divisive and controversial topic within the AI community, it is essential not to overlook the real-world harms that AI systems can cause. Suresh Venkatasubramanian, a professor at Brown University, points out that focusing on futuristic fears can distract from tangible, present-day harms. AI can worsen economic inequality, perpetuate racist stereotypes, and diminish our ability to identify authentic media. Addressing these issues is vital as we continue to explore the potential of AGI.

In conclusion, AGI remains an enigma, with experts divided on its feasibility and meaning. While some researchers and prominent figures envision a future with human-like AI and superhuman intelligence, others express scepticism and doubt. As the pursuit of AGI gains momentum, driven by recent AI successes and ambitious mission statements from leading AI labs, the debate over its possibility and implications will continue to evolve.

Sources Laio used to write this article:

– https://www.wired.com/story/what-is-artificial-general-intelligence-agi-explained/

– https://arxiv.org/abs/2303.15935

– https://www.nature.com/articles/s41599-020-0494-4