Lately, AI has been an unavoidable topic in cultural, political and online conversations. There is no way around it, whether it is about new tools, Elon Musk addressing the technology and suggesting a temporary halt to its development, or Italy banning ChatGPT in the whole country. It’s new, and while we think we understand it, few actually grasp it completely. Even those who do often disagree.

When it comes to novel and fast-evolving subjects like this one, regulation is often interdisciplinary. It concerns many fields, and the questions those fields raise are not immediate, straightforward, or reducible to a one-size-fits-all solution. In the case of AI, there are many variables to consider: decision-making, bias, privacy, and, of course, governance. Before coming up with practical solutions, and therefore even before asking what the regulatory policies should look like, we should ask who should write them.

What does this mean?

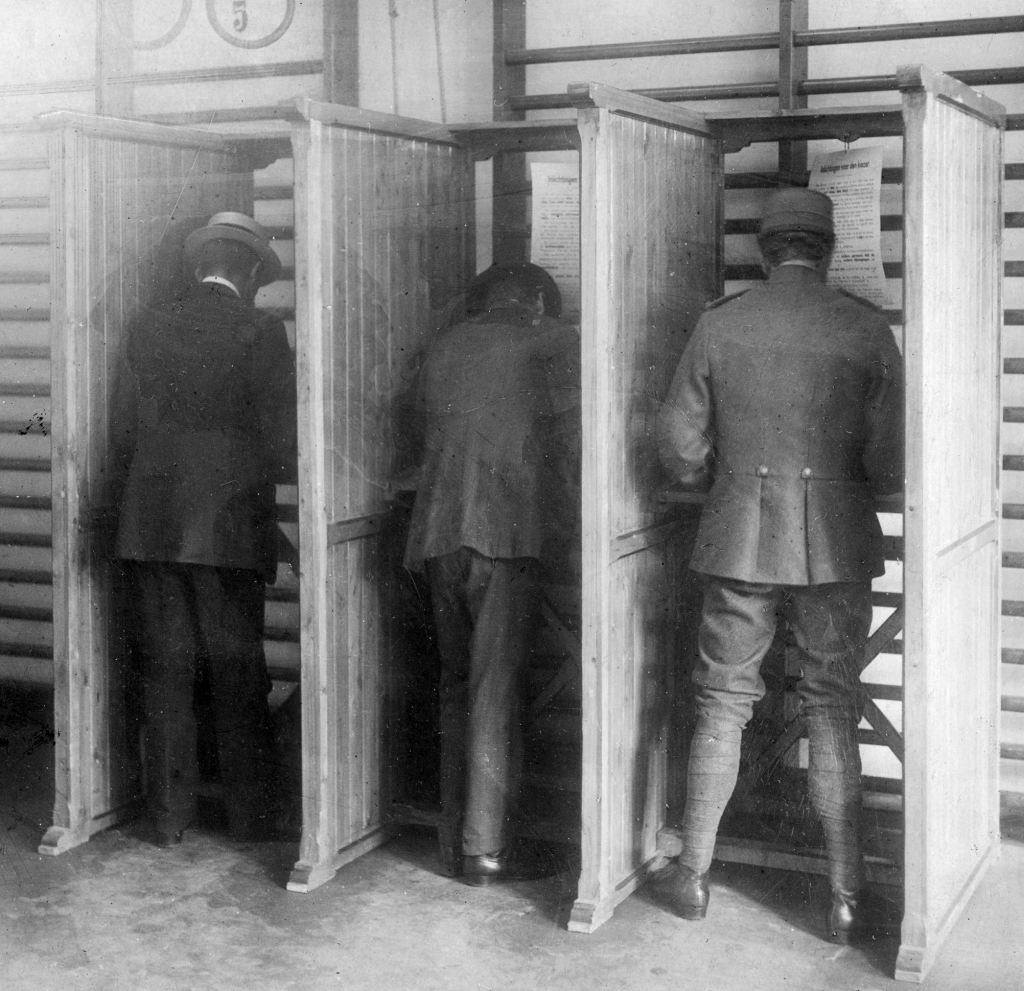

It means that the question of who should be appointed is controversial. As AI becomes an increasingly present element of our daily lives, there are many possible ways to implement regulatory policies, and the discussion could go on for days. For this article, let’s consider two: a fully democratic process and a carefully curated task force of experts.

In the first case, people might want to have a say in how we should limit AI’s reach, development, and application. On the one hand, democratization may seem the most direct and fruitful approach, since many compelling points could be addressed through a public vote. It carries the risk, however, that non-expert opinion leads to sub-optimal or overly politicized outcomes. On the other hand, having experts draft an ethical code seems to some like the most reliable approach. Still, the risk here is handing power over such a fundamental tool to a restricted number of people and, in so doing, forging a kind of “intellectual oligarchy.”

Education, governance and regulation

To try to make sense of possible outcomes, Innovation Origins spoke to three professionals working closely with AI. The first is Dr. Sara Mancini, a Senior Manager at an Italian consulting firm, who made the 2023 List of the 100 Brilliant Women in AI Ethics. In her opinion, before even starting to talk about governance, when it comes to AI, there is an even earlier step that needs to be taken into account: education.

“The ethical question which concerns me the most is about the interaction between people and AI,” Dr. Mancini says. “We are going towards a scenario where the required competences are not only technological but critical thinking as well, even for those not in this field of interest. Therefore, people need to get a proper education in AI from a very young age so that everyone can access the technology in a mindful way.”

Dr. Mancini thinks this perspective could eventually lead to the productive use of artificial intelligence, levelling the playing field across society and avoiding a likely situation where only those who can access AI tools can also understand and benefit from them. The approach would also enable a relationship with AI based on “augmentation” rather than “automation.” That is, people would learn to use AI consciously, for assistance and facilitation in different areas, rather than letting it completely “take over” tasks and becoming reliant on it.

However, in the short term, she believes that experts should draft regulatory policies. She explains that consultative methods, where companies and academia discuss regulation, are already in place across Europe and the United States to face the problem’s urgency.

The business side of things

Naturally, since it’s primarily businesses that are now developing, controlling, and distributing AI, the conversation around regulation has strong effects in that world too. AI Governance Limited Chief Executive Sue Turner, OBE, knows more than a thing or two about how to run a successful enterprise. She is also part of the aforementioned 100 Brilliant Women in AI Ethics list. On the regulatory front, she believes in the need for a dual approach: both “top-down” and “bottom-up.” In these terms, she says we should consider the frameworks that politicians are currently establishing and how these fit with social norms, which change as people’s views of AI evolve. However, she adds: “Right now the area is moving so fast that we are neither being led by the top down approach nor seeing the influence of the bottom-up approach.”

Like Dr. Mancini, Turner sees education as a powerful way to bring people closer to an understanding of AI and, in turn, its ethics. In her view, education is necessary for adults too, especially those involved in businesses that feature AI as one of the primary components of their model. “[Many business leaders] don’t even know how to identify the sorts of questions they should be asking,” she says. “That’s the biggest challenge: how do you get the knowledge into these leaders about what they should be aware of?”

Transparency and decentralization

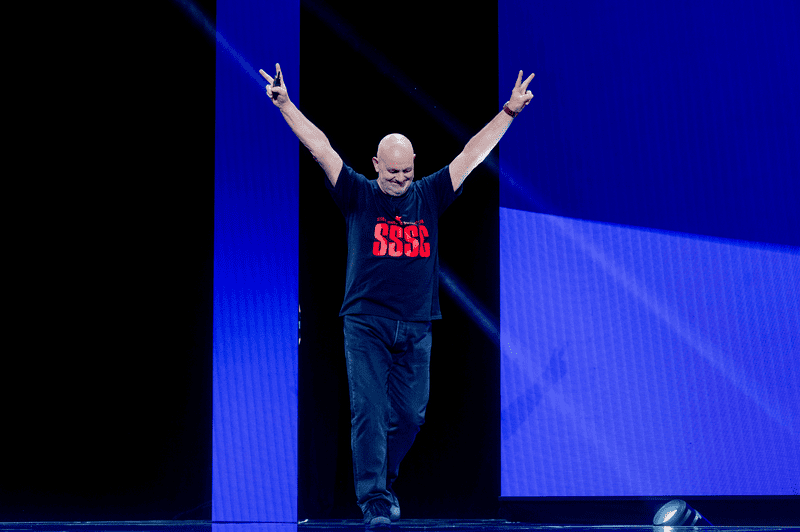

However, some people already have that knowledge and are thinking about how to use AI ethically, while keeping a critical eye on the wider field. Buster Franken is the CEO of FruitPunchAI, a challenge-based learning start-up that operates in the field of skill accreditation. He has some strong and well-founded opinions on governance and believes decentralization is one viable answer to the issue.

“Moral and legal codes should be decided in a democratic way, as they are now. This is not AI specific. To govern AI the following things also need to happen: open-sourcing all models, and preferably data, and financially incentivising the finding of ‘flawed’ (biased) models. This will automatically give rise to companies who specialize in testing for those biased models. What specific laws mean when applied to AI can be determined by experts. It’s important,” he continues, “that they don’t have the lead, this’d turn into a technocracy.” In his view, then, businesses would adhere to a single national ethical code and act as reciprocal watchdogs, keeping each other in check in a system where everyone has an incentive, including a financial one, to espouse ethical principles that ultimately benefit the public.

What is beyond dispute, given the current state of the art, is that the field is changing, and changing fast. For regulators to keep up with it, we first need to decide who the regulators are, but we cannot afford to spend too much time on definitions.