Urbanization and globalization have led to increased congestion in transportation systems. Autonomous vehicle technology promises to be more efficient, safer, and more environmentally friendly, and it is expected to play an important role in solving this problem. To date, however, autonomous vehicles are still fraught with a number of uncertainties. In addition to technical problems, questions of ethics also arise. After all, the software must be able to deal with unpredictable situations and make the necessary decisions when risk is involved. This task falls to the vehicle’s algorithms. Initial philosophical approaches were valuable but insufficient. Now an algorithm based on risk ethics could offer a viable solution.

The Trolley Problem

Initial approaches to autonomous vehicle decisions on the road followed traditional ethical patterns of thought. The result was an either/or maxim, which led to significant difficulties. This is because expected benefits and expected risks are usually asymmetrically distributed, or disputed, between the parties involved. As a result, an ethical principle had to be violated in many traffic situations.

The trolley problem, which goes back to Philippa Foot (1967) and was also described by Wendell Wallach and Colin Allen in 2009 in Moral Machines, serves to illustrate this problem. The scenario involves a train heading toward people on the tracks. The train is programmed to stop immediately if there are people on the tracks. It becomes more difficult, however, when the train can only swerve and has to decide whether to run over a smaller or a larger group of people on one of the two tracks, because human lives cannot be offset against one another.

Risk distribution via trajectory planning

Franziska Poszler of the Chair of Business Ethics at the Technical University of Munich (TUM) notes that the trolley problem focuses purely on accident scenarios. That falls short, she says, because autonomous vehicles do not face decisions only when there is a risk of an accident. They make decisions on an ongoing basis, for example how much distance to keep from other vehicles and road users, and when to brake. “These decisions also carry a normative content. Because implicitly, trajectory planning is used to decide on whom to impose how much risk,” says the business ethicist, who conducted research in the interdisciplinary ANDRE project together with colleagues from the Department of Automotive Engineering at the Institute for Ethics in Artificial Intelligence (IEAI) at TUM. The team’s paper was published in the journal Nature Machine Intelligence.

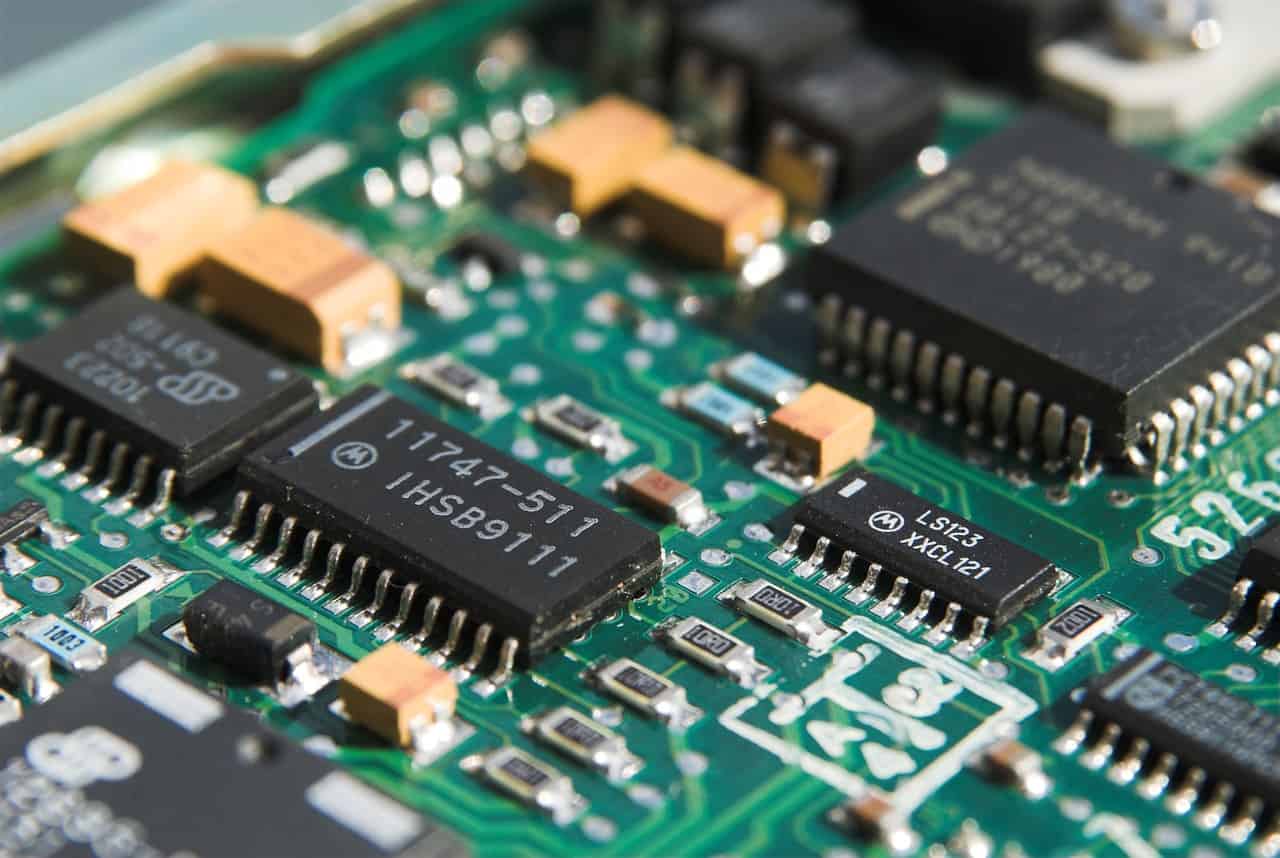

Trajectories are also known as trajectory curves, paths, or roads. They are generated from map- or sensor-based data and serve as the target variable for the downstream control of the autonomous vehicle. Among other things, a trajectory must be feasible and collision-free.

Institute for Ethics in Artificial Intelligence

Coding ethical rules

The ANDRE project explored how far ethical behavior can be integrated into the path and behavior planning of an autonomous vehicle. Answering this question required combining two different disciplines, namely algorithmics and philosophy. “The algorithm had to be built in such a way that it allowed for the possibility of considering different ethical principles. At the same time, the ethical principles had to be selected and formulated in such a way that they could be programmed,” explains Maximilian Geißlinger, a researcher at the Department of Automotive Engineering.

Probability models

The various ethical principles were implemented mathematically from the perspective of risk ethics, which defines risk as the product of harm and probability: risk = harm × probability. Trajectory planning, in turn, also relies on probability-based models when it comes to representing the behavior of human drivers. This shared use of probabilities is the interface at which the researchers in the ANDRE project started. Working with probabilities allowed them to make more sophisticated trade-offs and create an algorithm that fairly distributes risk on the road.
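As a rough illustration of the definition risk = harm × probability, the following Python sketch computes a risk value per road user for a single candidate trajectory. It is not the ANDRE project’s code: the class name `RiskEstimate`, the normalization of harm to the range 0 to 1, and all numbers are assumptions made purely for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RiskEstimate:
    """Hypothetical risk estimate for one road user on one candidate trajectory."""
    road_user: str
    collision_probability: float  # assumed estimate in [0, 1]
    harm: float                   # assumed normalized severity in [0, 1]

    @property
    def risk(self) -> float:
        # Risk as defined in risk ethics: harm multiplied by probability.
        return self.harm * self.collision_probability


# Made-up values: a collision is less likely with the truck than with the
# cyclist, and the cyclist would suffer far greater harm.
estimates = [
    RiskEstimate("cyclist", collision_probability=0.02, harm=0.9),
    RiskEstimate("truck", collision_probability=0.01, harm=0.1),
]

for estimate in estimates:
    print(f"{estimate.road_user}: risk = {estimate.risk:.4f}")

total_risk = sum(estimate.risk for estimate in estimates)
print(f"total risk = {total_risk:.4f}")
```

Expressing risk as a single number per road user is what makes graded trade-offs possible: two trajectories can be compared by how much risk each one imposes on whom, rather than only by whether a collision occurs.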

Risk potential and risk appetite

Risk assessment was based on a classification of road users. The central criteria were the risk they pose to others and their individual risk tolerance. A truck, for example, can cause great damage to other road users but in many scenarios suffers only minor damage itself. The opposite is true for a bicycle.

The algorithm was given principles and theories from risk ethics and from current ethics guidelines. Among other things, a maximum acceptable risk should not be exceeded in the various traffic situations, and the overall risk should be minimized. Furthermore, the research team calculated variables that reflect the responsibility of the road users. It can be assumed, for example, that they will obey traffic rules.
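The two principles named here, a cap on the maximum acceptable risk and minimization of the overall risk, can be sketched as a simple selection rule over candidate trajectories. This is a simplified illustration under stated assumptions, not the researchers’ published algorithm: the function `select_trajectory`, the threshold, the trajectory names, and all risk values are hypothetical.

```python
from typing import Dict, Optional

# Maps a trajectory name to the risk it imposes on each road user (assumed values).
RisksByTrajectory = Dict[str, Dict[str, float]]


def select_trajectory(candidates: RisksByTrajectory,
                      max_acceptable_risk: float) -> Optional[str]:
    """Return the acceptable trajectory with the lowest total risk, or None."""
    # Principle 1: discard any trajectory that imposes more than the
    # maximum acceptable risk on any single road user.
    acceptable = {
        name: risks
        for name, risks in candidates.items()
        if all(risk <= max_acceptable_risk for risk in risks.values())
    }
    if not acceptable:
        return None  # no acceptable option among the candidates

    # Principle 2: among the remaining trajectories, minimize the overall risk.
    return min(acceptable, key=lambda name: sum(acceptable[name].values()))


# Hypothetical overtaking scene with a cyclist ahead and an oncoming truck.
candidates = {
    "overtake_close": {"cyclist": 0.030, "truck": 0.020, "ego": 0.020},
    "overtake_wide": {"cyclist": 0.005, "truck": 0.040, "ego": 0.040},
    "follow_cyclist": {"cyclist": 0.004, "truck": 0.000, "ego": 0.001},
}

print(select_trajectory(candidates, max_acceptable_risk=0.02))  # follow_cyclist
```

Applying the cap before minimizing the total prevents a low overall risk from being achieved by loading a large share of it onto one road user, such as the cyclist.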

The problem with previous approaches was that critical situations on the road were only handled with a small number of possible maneuvers. When in doubt, the vehicle simply stopped. The risk assessment now introduced into the code creates more degrees of freedom with less risk for everyone. It’s no longer a matter of either/or – rather, a trade-off takes place that includes many options.

Priority for the weakest

Here’s an example: an autonomous vehicle wants to overtake a bicycle, but a truck is coming toward it in the opposite lane. Can the bike be overtaken without driving into the oncoming lane while still keeping enough distance from the bike? What is the risk to each vehicle, and what risk do these vehicles pose to the autonomous vehicle itself? The software analyzes such scenarios in a fraction of a second based on all available data about the environment and the individual participants.

When in doubt, the autonomous vehicle with the new software will always wait until the risk is acceptable to everyone. Aggressive maneuvers are avoided, and the autonomous vehicle neither freezes nor brakes abruptly.

According to TUM, this is the first algorithm to take into account the EU Commission’s 2020 ethics recommendations.

Plea for constructive discourse

The research project was based on tests of around 2,000 scenarios with critical traffic situations, distributed across different road types and regions such as Europe, the USA, and China. The data came from CommonRoad. The newly developed algorithms were analyzed in simulation in known and newly defined driving scenarios. In the future, the software will also be tested on the road using TUM’s EDGAR research vehicle. The code, which incorporates the findings of the research work, is available as open source.

Based on the first promising results, the researchers are calling for a reorientation of the research discourse. This discourse had recently focused on the trolley problem, which only illustrates the problem and does not offer a solution. Their own way of thinking, based on risk ethics, allows for a constructive approach instead. The researchers emphasize, however, that it is initially only a proposal that needs to be discussed further. In addition, further differentiations, such as cultural differences in ethical decisions, would have to be taken into account in the future.

Photo above:

The Mercedes-Benz prototype, Future Truck 2025, also drove autonomously in a column of traffic on the A14 autobahn near Magdeburg. This corresponds to level 3 autonomy, as the vehicle could also undertake maneuvers such as lane changes on its own and the driver did not have to permanently monitor the journey. (c) Wikimedia Commons – Michael KR