ChatGPT is all the rage right now. So much so that we seem to forget what it actually is: a language model. And that means it isn’t suited for all kinds of tasks that other machines are already capable of. Like playing chess, for one thing.

ChatGPT’s performance in terms of language is undoubtedly impressive. But that doesn’t mean the system can do anything. Playing chess against ChatGPT is nothing short of hilarious. OpenAI’s Large Language Model (LLM) just can’t seem to play coherently or even abide by the rules, let alone play well.

An entertaining example is YouTuber and International Master Levy Rozman’s (GothamChess on YouTube) game against the AI, in which ChatGPT played a sequence of absurd illegal moves, among other shenanigans. It captured its own bishop after three moves, generated material out of thin air and even resorted to moving its opponent’s pieces in the endgame. On some occasions, after an illegal move, when Rozman prompted it to do so, ChatGPT played another move, most of which were just as outlandish and illegal as the previous moves.

Similarly, Chessily.com founder Marc Cressac had Stockfish 15.1 – the most advanced chess AI ever developed – play against ChatGPT, which he described in an online article. As far as fun was concerned, the results did not disappoint. ChatGPT probably doesn’t find much delight in following strict rules. Maybe, after 1500 years, the game of chess needs a refreshing spin and ChatGPT is here to provide it. A coup d’état on one of the most popular games in human history, dethroning the authority of its set of rules and revolutionizing the status quo.

How ChatGPT approaches chess

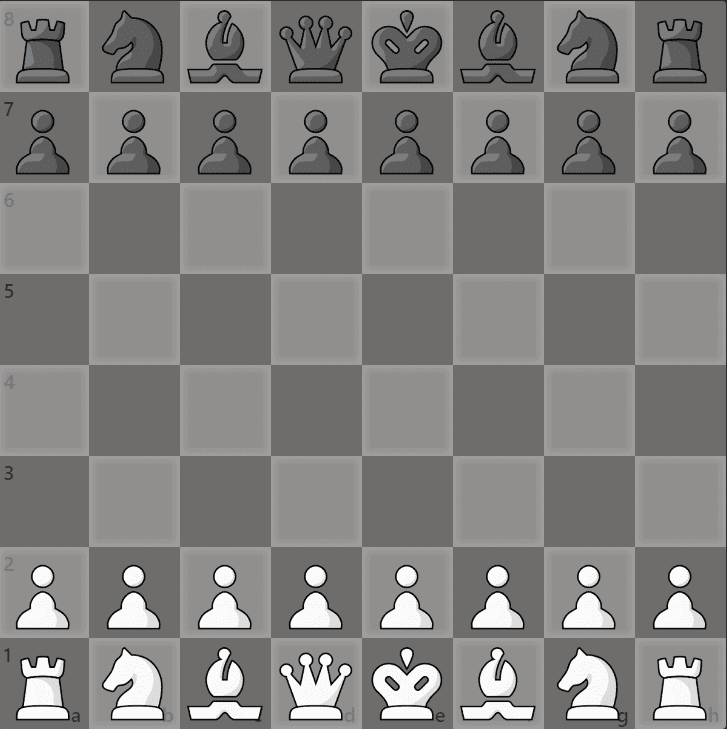

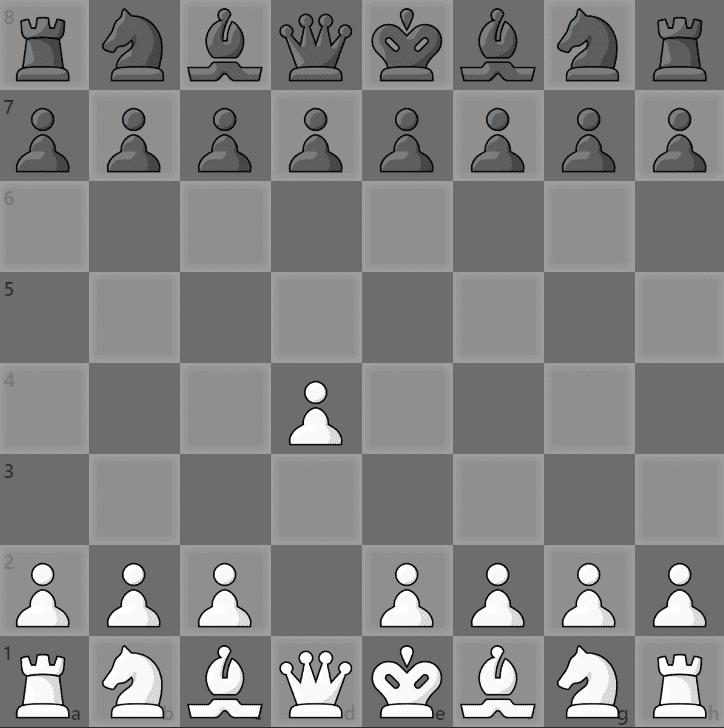

Jokes aside, let’s take a step back and try to understand how ChatGPT actually plays chess. The program, being a Large Language Model – meaning an artificial intelligence that can only produce information through written language in response to a prompt – is only able to play by using the algebraic notation system. What is that? It is a system that records how one of the 32 pieces on the 8 by 8 board moves, assigning a capital letter to indicate a piece (excluding pawns, which are only identified by the square they’re moving to), followed by a lower-case letter and a number which together identify one of the 64 squares on the board. Here is an example.

White’s Knight on the g1 square moved to the f3 square. In algebraic notation, this can be expressed as “Ng1 -> f3” or just “Nf3” as, in this particular position, the f3 square can only be legally reached this way by one of the two Knights.
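To make the notation concrete, here is a minimal sketch of how a short move string such as “Nf3” or “d4” breaks down into a piece and a target square. The helper name `parse_move` is hypothetical, and the sketch deliberately ignores captures, castling, checks, promotions and disambiguation; it only covers the simple form described above.

```python
import re

# Capital letters used for pieces in English algebraic notation.
# Pawns have no letter: a pawn move is just the target square.
PIECES = {"K": "King", "Q": "Queen", "R": "Rook", "B": "Bishop", "N": "Knight"}

# Optional piece letter, then a file (a-h), then a rank (1-8).
MOVE = re.compile(r"([KQRBN]?)([a-h])([1-8])")

def parse_move(text):
    """Hypothetical helper: split a simple move into (piece, square)."""
    match = MOVE.fullmatch(text)
    if match is None:
        return None  # not a simple move of the form covered here
    piece, file, rank = match.groups()
    return PIECES.get(piece, "Pawn"), file + rank

print(parse_move("Nf3"))  # ('Knight', 'f3')
print(parse_move("d4"))   # ('Pawn', 'd4')
print(parse_move("Nz9"))  # None
```

Note that this only checks the shape of the text. It says nothing about whether the move is legal in a given position, which would require tracking the board.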

Generally, this is the method ChatGPT has to resort to when playing chess, since it does not have access to a built-in board. Even so, people may think that such a sophisticated Language Model should also be capable of playing this classic game. After all, average chess players and grandmasters alike have come to terms with getting categorically crushed by unbeatable AIs, like the all-powerful Stockfish, with no chance of even reaching a draw.

So, ChatGPT’s chess performances should technically be amazing, right? Well, not necessarily. Now, you may think that it just hasn’t been specifically trained to play chess. While this is certainly true, it’s not that simple. In order to clear this up, we need to understand how a Large Language Model works.

LLMs

“Essentially, an LLM can tell you what is the probability that a bunch of words appears in a [given] sequence,” explains Evangelos Kanoulas, Professor at the University of Amsterdam’s Informatics Institute, “so it can tell you how likely a piece of text is.” Moreover, words from natural language are converted to tokens, where every word corresponds to 1 to 3 tokens. Also, Prof. Kanoulas goes on to say, an LLM can use conditional probability to associate words, meaning that on the basis of a given word it can “guess” what the next word (or token) will be. So, the larger the Language Model is, the better its estimate of what the next word will be.

“Let’s imagine that this machine has been trained by looking at how humans use words,” Prof. Kanoulas continues. “Most likely it has also seen chess games. So, someone writes ‘Chess game, player one’ and then the first move, [for example] d4. So now the AI learns that when a game starts, there is a good chance that the first move is d4, because it read it somewhere. [ChatGPT] has no idea about what this is. It doesn’t know what chess is and doesn’t know the rules”. Basically, the AI analyses the language, recognizes the words which indicate the start of a chess game and replies with a probabilistic guess based on what is likely to be an appropriate answer to a specific prompt.
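The idea of guessing the next token from what usually follows can be sketched with a toy counting model. This is far simpler than a real LLM, which uses a neural network over much longer contexts, and the four-game corpus below is invented purely for illustration. It only shows how “d4” can come out as the most likely first move without any notion of chess.

```python
from collections import Counter, defaultdict

# Invented toy corpus: each game is a string of space-separated tokens.
corpus = [
    "<start> d4 d5 c4",
    "<start> d4 Nf6 c4",
    "<start> e4 e5 Nf3",
    "<start> d4 d5 Nf3",
]

# Count how often each token follows each previous token.
counts = defaultdict(Counter)
for game in corpus:
    tokens = game.split()
    for prev, nxt in zip(tokens, tokens[1:]):
        counts[prev][nxt] += 1

def next_token_probs(prev):
    """Conditional probability of each next token given the previous one."""
    total = sum(counts[prev].values())
    return {tok: n / total for tok, n in counts[prev].items()}

def predict(prev):
    """Pick the most frequent continuation seen after `prev`."""
    return counts[prev].most_common(1)[0][0]

print(next_token_probs("<start>"))  # {'d4': 0.75, 'e4': 0.25}
print(predict("<start>"))           # d4
```

The model answers “d4” only because that string followed the start marker most often in the text it counted. Nothing in it represents a board, a piece or a rule.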

Linguistic predictions

Also, the machine is capable of making generalizations. This is to say that it can produce a sequence of words even though it has never seen a specific prompt. Maybe it has seen similar or related ones and it draws information from them. So, with no concept of the rules of chess, and having possibly seen a good number of chess games with differing first moves, it produces a generalized answer, which can result in our “d4” example. However, the nature of this guess should not be misunderstood. It is not a computational analysis of all the games the AI has seen, but only a linguistic prediction.

In other words, ChatGPT does not play “d4” because it knows that it is a viable move, but only because the popularity of the move in chess games played by humans has made the text “d4” appear numerous times after the start of a game. “One thing that it knows,” Prof. Kanoulas points out, “is that, in this case, a letter is probably not followed by [another letter], so it may not do ‘dd’. But it could. Maybe something weird happens, like a human playing an illegal move that breaks the probability of what the AI will do next. What will come out of that is something strange.”

What does the AI know?

Some may mistakenly think that ChatGPT is aware of the fact that the playing field is an 8 by 8 checkered board. However, it does not even know that. Again, the reason why, while playing, ChatGPT is not likely to go past the letter “h” and the number 8 is not because it knows it cannot do that. Rather, it has never, or only very rarely, seen anyone type a chess move that goes past “h” or 8.
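A rough way to picture this is a frequency model with smoothing, where every possible string keeps a small share of probability even if it was never seen. The counts and the 9 by 9 vocabulary below are invented for illustration, and add-one smoothing is a stand-in here, not a description of how ChatGPT is actually built.

```python
from collections import Counter

# Invented counts of target squares seen in training text.
seen = Counter({"d4": 50, "e4": 45, "f3": 30, "c3": 20})

# Hypothetical vocabulary: any letter a-i followed by any digit 1-9,
# so it includes squares such as "i9" that do not exist on a chess board.
vocab = [f + r for f in "abcdefghi" for r in "123456789"]

def smoothed_prob(token, alpha=1.0):
    """Add-alpha smoothing: unseen tokens get a small, nonzero probability."""
    total = sum(seen.values()) + alpha * len(vocab)
    return (seen[token] + alpha) / total

# "i9" is far less likely than "d4", but it is never ruled out.
print(smoothed_prob("d4") > smoothed_prob("i9") > 0)  # True
```

The off-board square is unlikely only because it is rare in the data. No step in the calculation checks it against the size of the board.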

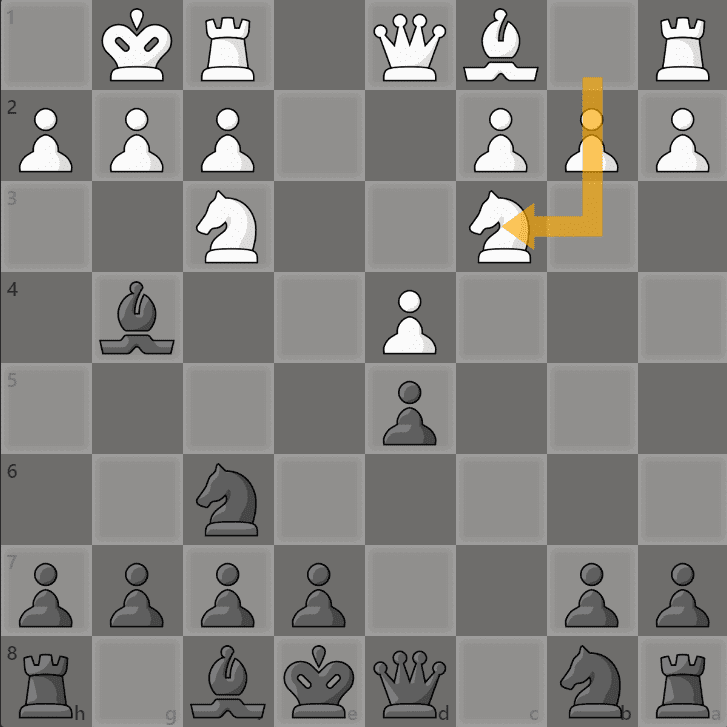

Nevertheless, it is still very much capable of making mistakes. For example, in Rozman’s YouTube video mentioned earlier, ChatGPT, after capturing its own bishop by an illegal castling move, moved its Knight from the b1 square to the c3 square, and found itself in the position shown on the following board.

For this move, the AI provided this comment: “I’ll play Nc3 [Knight to c3], attacking your bishop and developing a piece”. While the move definitely develops (in chess-speak, “advances” or “makes a given piece able to move further”) the Knight, in no way does it attack one of Rozman’s Bishops. ChatGPT may have supplied this line of commentary because Knights often tend to attack Bishops, just not in this position and, normally, only when it is fair and legal to do so within the rules.

Not bound by hardcore game rules

Here lies the whole premise: ChatGPT’s training and learning process is not bound by hardcore game rules, like a chess engine is. Steven Abreu, MSc, a researcher from the University of Groningen (the Netherlands), clarifies that what works well for predicting the next token in language is to “keep many options open”, and then to select “the most likely option”. But an LLM does not learn to invalidate some options; it just assigns a lower probability to those which tend to appear less.
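The contrast between lowering a probability and ruling a move out can be sketched as follows. The scores are invented numbers for White’s first move, and the `legal` set is a hand-written subset of the legal moves in the starting position, not the output of a real move generator. The first distribution mimics a language model, the second mimics a system that applies the rules as a hard filter before choosing.

```python
import math

def softmax(scores):
    """Turn arbitrary scores into probabilities that sum to 1."""
    top = max(scores.values())
    exps = {k: math.exp(v - top) for k, v in scores.items()}
    total = sum(exps.values())
    return {k: e / total for k, e in exps.items()}

# Invented scores for candidate first moves as text continuations.
scores = {"e4": 3.0, "d4": 2.8, "Nf3": 2.0, "Bc4": 0.5, "O-O": -1.0}

# Hand-written for this example: which candidates are legal on move one.
# "Bc4" and "O-O" are impossible in the starting position.
legal = {"e4", "d4", "Nf3"}

# Language-model style: every option keeps some probability.
probs = softmax(scores)
illegal_mass = sum(p for move, p in probs.items() if move not in legal)

# Rule-bound style: illegal options are removed before normalizing.
masked = softmax({m: s for m, s in scores.items() if m in legal})

print(illegal_mass > 0)   # True: illegal moves can still be sampled
print("O-O" in masked)    # False: the filter removes them entirely
```

Picking the single most likely option would still give a legal move here, but whenever the model samples, or the position is unusual, the leftover probability on illegal moves can surface.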

Ultimately, all things considered, is it accurate to state that ChatGPT actually plays chess? In a strict sense, no. ChatGPT responds to language that we, as humans, understand to be related to the game, without any concept of the game being played.

Will ChatGPT ever be good at chess?

Both Prof. Kanoulas and Abreu believe that, if allowed the time needed, ChatGPT could learn to play chess legally, or even well. “I believe that LLMs can be trained to play chess properly,” Abreu states. According to him, this could be done through Reinforcement Learning from Human Feedback, a process used to train ChatGPT, in which humans annotate which responses to a question are most suitable and ChatGPT then gradually learns to become better at having conversations. Alternatively, the AI could be fine-tuned on existing chess games in order to predict the best moves. Or again, one could train ChatGPT to play chess like a chess engine. The gist is: there are many ways this could happen.

In this regard, Prof. Kanoulas says that at this stage ChatGPT may not play as well as a chess AI does, because it “knows nothing about rewards, it knows nothing about what it means to win [for example] the Queen”. But if it sees enough times that, on particular occasions, people tend to take the Queen, it will also take it. “Probabilistically speaking, it would kind of understand – not because it can calculate the reward itself, but because the humans did that in their head and the chess AI did in its system – that this is more likely to happen”. In short, it doesn’t know why it has to take the Queen, nor how that’s going to affect the future, as the AI knows nothing about winning. Or losing, for that matter. It is just a high-probability response.

Still, the conversation remains open as new technologies and scientific methods are rolled out in the artificial intelligence field. Concepts like “neurosymbolic knowledge” (a matter for another time!) could potentially revolutionize machine learning. Or maybe not. Time – and research – will tell. For now, we get to laugh at ChatGPT’s inability to work out tactics and calculations.