The week that marks the first anniversary of the start of the war in Ukraine is a historic one. Monday’s visit to Kyiv by United States president Joe Biden was quickly followed by a speech in which Russia’s president Vladimir Putin announced that the country was suspending its participation in the New START nuclear treaty. Meanwhile, the US and EU countries are strengthening their support for Ukraine by sending tanks and air defense systems. In addition to military equipment, artificial intelligence (AI)-driven systems may also be influencing how the conflict develops further.

AI in warfare was indeed the focus of the REAIM conference: Responsible AI in the Military Domain. The two-day summit, co-hosted by the Netherlands and South Korea in the Dutch city of The Hague, included panel discussions on the use of the technology and demos of a range of AI applications. On the Ukrainian battlefield, data processing algorithms and AI-powered drones are the main examples of how computer-assisted intelligence can be deployed.

The impact of AI

Right now, AI’s main impact is the competitive advantage of faster information processing. “The difference that automated technologies like AI deliver is huge. If you have been focusing on this, you are able to outperform countries that have spent US$65 billion a year on defense for years,” Alex Karp underlined during one of the REAIM sessions. He is the CEO of Palantir, a data analytics company whose software is being used by Kyiv for military offensives.

But when it comes to the Russian army, the theory does not match practice, says Samuel Bendett, an adviser with CNA. Bendett took part in one of REAIM’s panel sessions to discuss the forthcoming book The AI Wave in Defence Innovation with some of the other co-authors. “Prior to February 2022, Russian military academia was full of logical, interesting, and relevant analysis on the evolution of AI across the world’s military forces. Yet, there is a huge gap between what they say about AI and the war they have ended up fighting.”

In his opinion, military, industrial, and political problems are encumbering Russia’s performance. Yet while some of these problems are weighing on Moscow’s army, the Russians can solve others on the fly. “They are aware of the problems that have become very public and of those that they’re unable to fix over the course of this war. At the same time, their information technology sector is capable of evolving rather quickly. If Ukrainians get somewhere first, Russians will quickly copy them,” explains Bendett.

AI-powered tanks

AI and its associated warfare applications are still in their infancy. Following years of testing and development, the war in Ukraine is the first actual testbed. If AI analytics is already a valuable tool for those planning courses of action in war, then other use cases may soon enter the battlefield too.

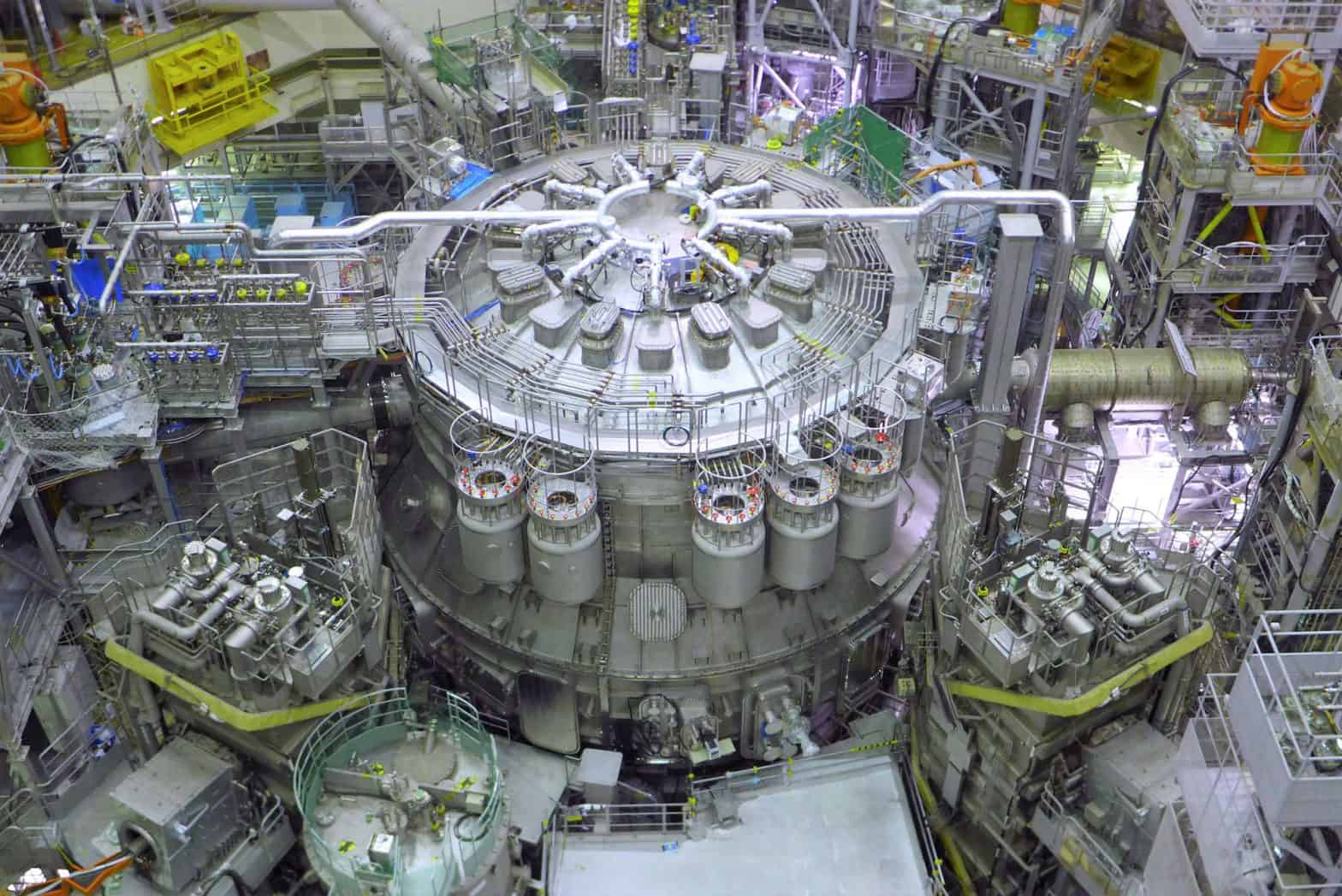

Russia’s former aerospace boss Dmitry Rogozin announced on February 2 the arrival of four Marker robotic tanks in Donbas. Markers are unmanned ground vehicles (UGVs) that resemble tanks. They come in four different configurations: Reconnaissance, Combat, Guard, and Logistics. The combat version can be equipped with anti-tank guided missiles, automatic grenade launchers, and machine guns. It can also carry drones for combat. Most importantly, the robot has advanced movement capabilities and a vision system whose AI algorithms process the data it collects.

Rogozin announced the start of the trial of the Marker tanks, including the combat version and potentially the AI capabilities. As this is a prototype test, it will probably have a limited impact in the short term, but it will yield relevant information for the future development of the technology, according to Bendett. “However, the Russian defense industry is not gearing up to field hundreds of UGVs. In the war, they are using remote-controlled systems, deploying them in areas Russia cleared the Ukrainian military from. The Markers are intended to be operated in combat zones, and it is very difficult for soldiers and experienced tank commanders to use them in those circumstances. As in the case of all other innovations, this is all about testing.”

Ethics and warfare AI

In another REAIM panel on the impact and regulation of algorithmic warfare, Prof. Dr. Frans Osinga from Leiden University stressed how “AI is not a revolution but a new reality.” As the development is taking place, it also poses pressing ethical concerns. How should these technologies be operated? Who is responsible for targeting and firing missiles with AI-powered machinery? What targets can be included? The list can go on and on.

As with every new reality and development, the conversation has just started. Ethical and regulatory frameworks will take time and negotiation before they are up and running. One of the first official contributions to the debate comes from the US Department of State, which recently released a declaration on the responsible use of military AI. The statement lists 12 points on how states should approach the use of military AI. In doing so, it stresses adherence to international law and human control over technology.

“It is a start to a discussion and towards transparency. This conversation has to happen between technology leaders such as the US and the countries that often are obliged to follow. This is very fresh and lacks a lot of specifics, which will probably be fleshed out in subsequent discussions,” Bendett concludes.