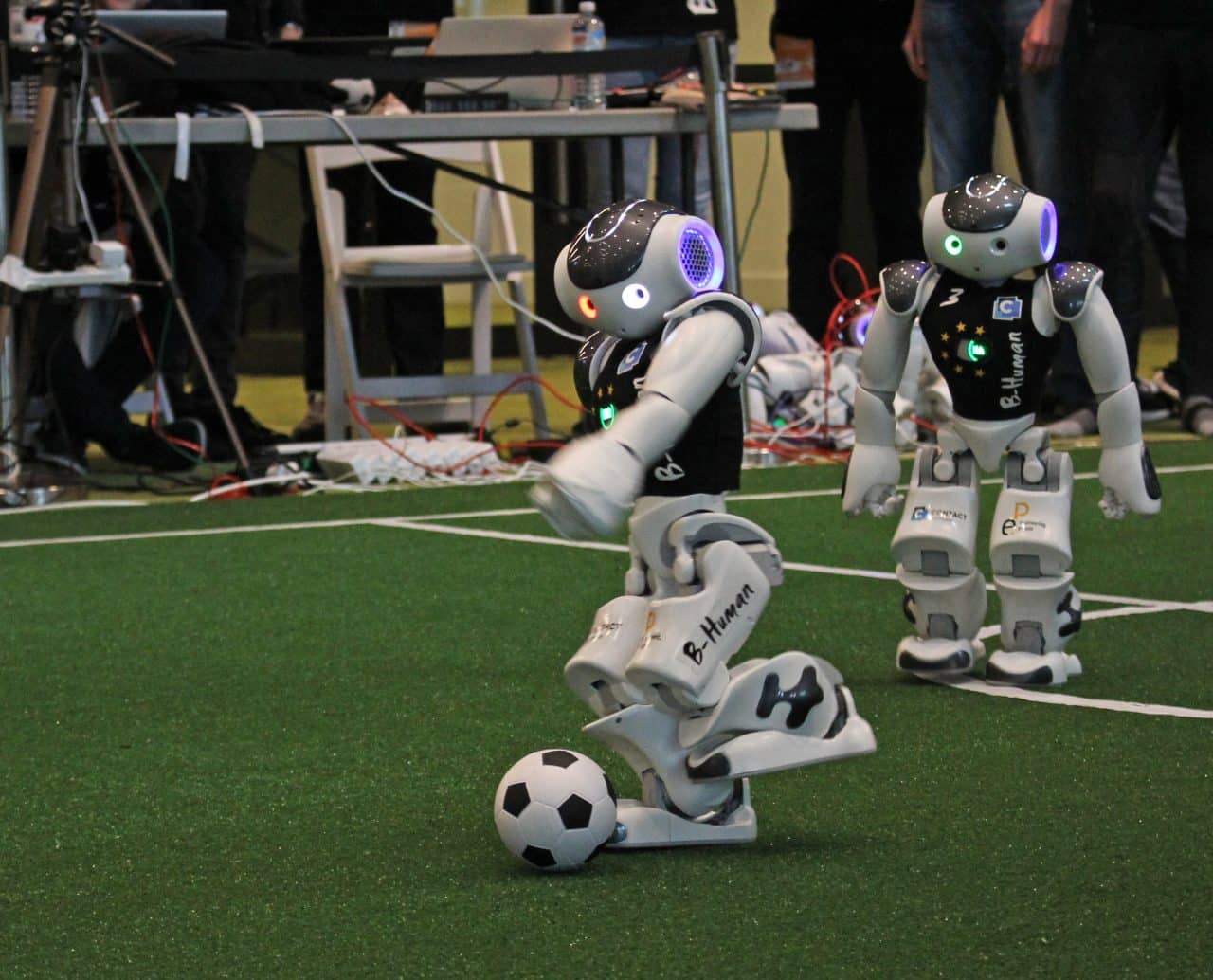

They have three more World Cup titles than the German national football team, yet when the B-Human team from Bremen arrived in July, there were no crowds waiting at Bremen airport. Robot football is not yet prime-time TV, even though its teams are trendsetters in programming and testing. An interview with Tim Laue, one of the leaders of the reigning German world champion team, about the current challenges facing RoboCup, the things people still do much better than robots, and what robot football has to do with autonomous vehicles.

If you compare the Bundesliga with robot football, what is the greatest challenge robots currently face?

Oh, there are many. It all starts with running. Human joints reach much higher speeds; only highly specialized industrial robots built to assemble very specific things occasionally reach that level. Humanoid robots today can make a wide range of movements. Our NAOs, for example, have six electric motors per leg and therefore many degrees of freedom. At the same time, however, there is always an energy and space problem. It is not yet possible to move robotic limbs as finely and reactively as a human can. But that is exactly what we need in order to operate such a machine without accidents or to shoot at goal with real force.

Tim Laue


Do all teams use the same type of robot in your competition?

At RoboCup we compete in the so-called Standard Platform League (Eindhoven competed in a different league, ed.). Everyone there uses SoftBank's NAO robot, which is about 60 cm tall and weighs five and a half kilos. So it is essentially a software competition on a single, shared hardware platform. In the Humanoid AdultSize League, by contrast, the robots may be up to 1.50 m tall and are built by the teams themselves.

Do experts from various disciplines compete with each other in different competitions?

In our league it is mainly computer scientists who compete against each other; in other leagues it is often electrical or mechanical engineers. However, robot football is not very widespread in those disciplines in Germany.

Just a coincidence?

At the beginning of this century, the German Research Foundation specifically funded robot football. Across Germany there were about a dozen working groups of scientists who were paid full-time to teach robots how to play soccer. That gave the field broad momentum, and German teams became internationally successful. In my opinion, this still has an effect today.

What about the competition?

The teams taking part in RoboCup are very diverse. The team from Austin, Texas, for example, consists entirely of PhD students who already hold a degree. There are hobby teams and teams that come together for a particular project. Here in Bremen it is an official course: the students can earn a relatively large number of credits and invest a lot of time in it. That helps.

What do the students get out of it, apart from credit points?

Building on that experience, they go on to work in areas such as industrial image processing or autonomous vehicles.

Does one have something to do with the other?

As I see it, RoboCup is a test environment for very complex software. The preconditions are well defined, but we have no influence on what the other teams do. To deal with this, we draw on an extensive pool of algorithms, develop new ones, and test whether they work together under difficult conditions.

Are the machines on their own during the game?

Absolutely. The five players on a team do everything on their own. The program running on the robots must first recognize the state of play (the field, its own position, the positions of teammates and opponents, the goals, the ball) and then make the right decisions in every game situation.

Are there no predefined step sequences, for example two defensive moves with synchronized movement within each sequence?

Yes, you can specify step sequences. Under real conditions, however, the robot may manage only two and a half steps forward instead of the planned three, or turn just 80 degrees instead of the planned 90.
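The kind of correction described in this exchange, comparing what the robot actually did with what it planned and making up the difference, can be sketched in a few lines of Python. This is a hypothetical illustration, not B-Human's code: the function names, the 5 cm step length, the assumption that the measured values come from odometry, and the treatment of walking and turning as two independent one-dimensional motions are all assumptions made for the example.

```python
import math

# Hypothetical step length; the interview gives no figure.
STEP_LENGTH_M = 0.05


def normalize_angle(angle: float) -> float:
    """Wrap an angle in radians to the interval [-pi, pi)."""
    return (angle + math.pi) % (2 * math.pi) - math.pi


def remaining_motion(planned_forward_m: float, planned_turn_rad: float,
                     measured_forward_m: float, measured_turn_rad: float):
    """Return (forward, turn) still needed to complete a planned motion.

    Simplifying assumption: walking straight and turning on the spot are
    treated as two independent one-dimensional motions. The measured values
    would come from the robot's odometry (assumed here).
    """
    remaining_forward = planned_forward_m - measured_forward_m
    remaining_turn = normalize_angle(planned_turn_rad - measured_turn_rad)
    return remaining_forward, remaining_turn


# Numbers from the interview: three steps planned, two and a half achieved;
# a 90 degree turn planned, 80 degrees achieved.
fwd, turn = remaining_motion(3 * STEP_LENGTH_M, math.radians(90),
                             2.5 * STEP_LENGTH_M, math.radians(80))
print(f"still to go: {fwd:.3f} m forward, {math.degrees(turn):.1f} deg turn")
```

Wrapping the angle difference matters: a robot that overshoots to 350 degrees on a planned 0 degree heading should turn back by 10 degrees, not spin almost a full circle.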

So this type of robot cannot carry out even basic movements precisely?

There can be discrepancies. The robot should notice them and correct the rotation as needed. Many thousands of lines of source code revolve around the question of where exactly the robot is.

For humans this is a minor matter. I immediately know where I am on the field, where the goals are, and so on. I can also see where the robot is on the field. Our robots first have to orient themselves. They have two video cameras, one on the chin and one on the forehead, which together deliver 60 images per second, and we want to evaluate all of them. That means we examine every image for a sideline, the center circle or the penalty area. The robot uses these features to calculate its own position.
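The interview does not say how B-Human computes the position from the recognized features, so the following is a deliberately simplified stand-in: trilateration from measured distances to three known landmarks, solved by linear least squares. The landmark coordinates (center point, one penalty mark and one corner of an assumed 9 m by 6 m field) are hypothetical. Real systems also have to cope with noisy measurements and with the symmetry of the field, which makes mirrored positions look identical.

```python
import math


def trilaterate(landmarks, distances):
    """Estimate (x, y) from distances to three or more known landmarks.

    Subtracting the first circle equation from the others gives a linear
    system A @ [x, y] = b, which is solved in the least-squares sense via
    the 2x2 normal equations (A^T A) p = A^T b.
    """
    (x1, y1), d1 = landmarks[0], distances[0]
    s_xx = s_xy = s_yy = b_x = b_y = 0.0
    for (xi, yi), di in zip(landmarks[1:], distances[1:]):
        ax, ay = 2 * (xi - x1), 2 * (yi - y1)  # one row of A
        b = d1**2 - di**2 + xi**2 - x1**2 + yi**2 - y1**2
        s_xx += ax * ax
        s_xy += ax * ay
        s_yy += ay * ay
        b_x += ax * b
        b_y += ay * b
    det = s_xx * s_yy - s_xy * s_xy
    if abs(det) < 1e-12:
        raise ValueError("landmarks are (nearly) collinear")
    return ((s_yy * b_x - s_xy * b_y) / det,
            (s_xx * b_y - s_xy * b_x) / det)


# Hypothetical landmark positions in metres, origin at the center point:
# center point, one penalty mark, one field corner.
landmarks = [(0.0, 0.0), (3.2, 0.0), (4.5, 3.0)]
true_position = (1.0, -1.0)
# Noise-free "measurements" for the demo; real ones come from camera images.
distances = [math.dist(true_position, lm) for lm in landmarks]
x, y = trilaterate(landmarks, distances)
print(f"estimated position: ({x:.2f}, {y:.2f})")
```

With more than three landmarks the same function averages out measurement noise, because the normal equations give the least-squares fit over all of them.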

The machines are not using a GPS signal? All this is done via the video data generated in the field?

Correct.

Your team has now won the world championship seven times. What are your robots better at than the others?

In general, we are able to do everything better than our opponents. Or, to put it the other way around: if our robots had not mastered one of the many tasks, such as ball recognition, or if they had fallen over more often than their opponents, those errors would have accumulated over the course of the game, and in all likelihood we would have lost. Robust algorithms for every task seem to be our most important competitive advantage. Other teams also often praise how well our five players work together tactically.

So teamwork is crucial at Robo-football?

Absolutely. This year we played a lot of passes. For that to work, the receiving player first has to position itself so that it can be passed to at all. The player with the ball, in turn, must decide whether to shoot, dribble or pass. That worked well for us in many cases.
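The choice between shooting, dribbling and passing can be sketched as a simple rule-based policy: shoot if the lane to the goal is free, otherwise pass to an open teammate, otherwise dribble. The function names, the 0.3 m clearance and the rule order are hypothetical; the interview does not describe B-Human's actual behavior logic. The usage example also shows how a single obstacle near the line to the goal, real or phantom, is enough to turn a shot into a pass.

```python
import math


def distance_to_segment(p, a, b):
    """Shortest distance from point p to the line segment a-b (2-D tuples)."""
    (px, py), (ax, ay), (bx, by) = p, a, b
    dx, dy = bx - ax, by - ay
    length_sq = dx * dx + dy * dy
    if length_sq == 0.0:
        return math.dist(p, a)
    # Project p onto the segment and clamp to its end points.
    t = max(0.0, min(1.0, ((px - ax) * dx + (py - ay) * dy) / length_sq))
    return math.dist(p, (ax + t * dx, ay + t * dy))


def lane_is_free(start, target, obstacles, clearance=0.3):
    """True if no obstacle lies within `clearance` metres of the lane."""
    return all(distance_to_segment(o, start, target) > clearance
               for o in obstacles)


def choose_action(ball, goal, teammates, obstacles):
    """Hypothetical priority rule: shoot, else pass, else dribble."""
    if lane_is_free(ball, goal, obstacles):
        return ("shoot", goal)
    open_mates = [m for m in teammates if lane_is_free(ball, m, obstacles)]
    if open_mates:
        # Prefer the open teammate closest to the opponent goal.
        return ("pass", min(open_mates, key=lambda m: math.dist(m, goal)))
    return ("dribble", goal)


goal = (4.5, 0.0)
ball = (3.5, 0.0)
teammate = (2.5, 1.5)
print(choose_action(ball, goal, [teammate], obstacles=[]))
print(choose_action(ball, goal, [teammate], obstacles=[(4.0, 0.1)]))
```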

Once, though, the robot with the ball was standing right in front of the opponent's goal and, instead of shooting, passed the ball to the nearest teammate.

Do you review the data after a game and then improve the software?

Each robot records everything that happens, 60 times per second: the images taken, what it detected, what decisions it made, and so on. That adds up to several gigabytes of data per robot per game. During the game, we note the times when something stupid happens and look into it afterwards.
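The workflow of recording every frame and marking interesting moments for later review could look roughly like the following sketch. The `Frame` and `GameLog` classes, their fields and the review window of five seconds before and two seconds after each mark are hypothetical; only the rate of 60 records per second comes from the interview.

```python
from dataclasses import dataclass, field

FRAME_RATE_HZ = 60  # records per second, as mentioned in the interview


@dataclass
class Frame:
    t: float          # seconds since the start of the game
    detections: dict  # hypothetical, e.g. {"ball": (x, y)}
    decision: str     # hypothetical, e.g. "pass"


@dataclass
class GameLog:
    frames: list = field(default_factory=list)
    markers: list = field(default_factory=list)

    def record(self, frame: Frame) -> None:
        self.frames.append(frame)

    def mark(self, t: float) -> None:
        """Note a moment worth reviewing after the game."""
        self.markers.append(t)

    def review(self, before: float = 5.0, after: float = 2.0):
        """Yield (marker, frames) for a time window around each marker."""
        for m in self.markers:
            window = [f for f in self.frames if m - before <= f.t <= m + after]
            yield m, window


log = GameLog()
for i in range(10 * FRAME_RATE_HZ):  # ten seconds of simulated play
    log.record(Frame(t=i / FRAME_RATE_HZ, detections={}, decision="walk"))
log.mark(5.0)  # "something stupid happened" at t = 5 s
for marker, frames in log.review():
    print(f"marker at {marker} s: {len(frames)} frames to inspect")
```

Keeping the markers separate from the frames means the full recording stays untouched, and the review window can be widened later without re-recording anything.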

Let’s talk about that absurd pass. What was that about?

In that particular case, the calculations showed that the direct path to the goal was blocked. This may have been because the robot recognized a second opponent who wasn't actually there. Say it recognizes an opponent, looks away to locate the ball, looks back and recognizes a second opponent, who is in fact the first one and has simply moved on in the meantime. Suddenly there seems to be no chance of a shot at goal.
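One common way to avoid counting the same opponent twice is to gate each new observation by how far an already-known obstacle could plausibly have moved since it was last seen. The sketch below, with its `Track` class, an assumed maximum opponent speed of 0.3 m/s and an assumed 0.2 m sensor tolerance, is a hypothetical illustration rather than B-Human's obstacle model. With a gate that ignores motion, the opponent from Laue's example, seen again after it has moved, becomes a second, phantom obstacle.

```python
import math
from dataclasses import dataclass


@dataclass
class Track:
    pos: tuple        # last known (x, y) of an opponent, metres
    last_seen: float  # time of the last observation, seconds


def associate(tracks, observation, t, max_speed=0.3, tolerance=0.2):
    """Assign an obstacle observation to a known track or start a new one.

    A track is a candidate if the opponent could have moved from its last
    known position to the observation in the elapsed time, plus a sensor
    tolerance. The nearest candidate wins.
    """
    best, best_d = None, math.inf
    for track in tracks:
        gate = max_speed * (t - track.last_seen) + tolerance
        d = math.dist(track.pos, observation)
        if d <= gate and d < best_d:
            best, best_d = track, d
    if best is None:
        tracks.append(Track(observation, t))  # treated as a new opponent
    else:
        best.pos, best.last_seen = observation, t
    return tracks


# Laue's example: one opponent seen, the robot looks away for three seconds,
# then sees the same opponent again a little further on.
aware = associate([], (2.0, 0.0), t=0.0)
aware = associate(aware, (2.6, 0.3), t=3.0)
static = associate([], (2.0, 0.0), t=0.0, max_speed=0.0)
static = associate(static, (2.6, 0.3), t=3.0, max_speed=0.0)
print(len(aware), len(static))  # motion-aware gate vs. gate that ignores motion
```

A real tracker would also drop tracks that have not been confirmed for a while; that step is left out here to keep the example short.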

Technical problems with the sensor?

As a human being, I can build a mental map of my surroundings. Even if the ball rolls past me and I can no longer see it, I still have an idea of where it probably is. Robots do something similar. They calculate their own movements and remember that something was there, even if they cannot see it at that moment. The longer they don't look, the less precise their estimate of anything outside their field of view becomes. And the fact that all the robots look the same doesn't make it any easier.
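The mental map that grows vaguer the longer a robot looks away can be sketched as the predict and update steps of a heavily simplified Kalman-style filter for the ball. The class, the noise values and the single variance shared by both axes are assumptions for illustration; a full filter would track a covariance matrix that includes the velocity as well.

```python
from dataclasses import dataclass


@dataclass
class BallBelief:
    x: float    # estimated position, metres
    y: float
    vx: float   # estimated velocity, metres per second
    vy: float
    var: float  # positional variance in m^2, shared by both axes (simplification)

    def predict(self, dt: float, process_noise: float = 0.05) -> None:
        """Move the estimate forward in time while the ball is not seen.

        Position follows the last known velocity; uncertainty keeps growing.
        """
        self.x += self.vx * dt
        self.y += self.vy * dt
        self.var += process_noise * dt

    def update(self, mx: float, my: float, meas_var: float = 0.01) -> None:
        """Blend in a camera measurement using a scalar Kalman gain."""
        gain = self.var / (self.var + meas_var)
        self.x += gain * (mx - self.x)
        self.y += gain * (my - self.y)
        self.var *= 1.0 - gain


ball = BallBelief(x=0.0, y=0.0, vx=0.5, vy=0.0, var=0.01)
for second in range(1, 4):  # the robot looks away for three seconds
    ball.predict(dt=1.0)
    print(f"t={second}s  x={ball.x:.2f}  variance={ball.var:.2f}")
ball.update(1.4, 0.1)  # the ball is seen again
print(f"after observation: x={ball.x:.2f}  variance={ball.var:.3f}")
```

Because the variance has grown while the robot was looking away, the gain is close to 1 and the fresh observation largely replaces the old guess.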

Don't the robots send out a friend-or-foe signal?

They wear numbers, which the human referee can easily read. In theory, our software could use the numbers too. But in practice that isn't so easy, and we haven't put any work into it yet.

Do they communicate with each other?

The robots can share data via WLAN. Every second, each robot sends its coordinates to the other players: where do I think I am, where are you, where is the ball, and so on. Each robot then builds its own map of the state of the game.
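How a robot might combine its teammates' reports into its own picture of the game can be sketched with a hypothetical message type and inverse-variance weighting of the reported ball positions. The field names, the two-second staleness cutoff and the weighting scheme are assumptions; this is neither the league's actual message format nor B-Human's fusion method. The receiving robot would presumably include its own estimate as one more entry in the list.

```python
from dataclasses import dataclass


@dataclass
class TeamMessage:
    player: int
    pose: tuple       # (x, y, heading): where the sender thinks it is
    ball: tuple       # (x, y): where the sender thinks the ball is
    ball_var: float   # sender's uncertainty about the ball, m^2
    timestamp: float  # seconds


def fuse_ball(messages, now, max_age=2.0):
    """Combine recent ball reports by inverse-variance weighting.

    Returns (x, y, variance) or None if every report is too old.
    """
    recent = [m for m in messages if now - m.timestamp <= max_age]
    if not recent:
        return None
    weights = [1.0 / m.ball_var for m in recent]
    total = sum(weights)
    x = sum(w * m.ball[0] for w, m in zip(weights, recent)) / total
    y = sum(w * m.ball[1] for w, m in zip(weights, recent)) / total
    return x, y, 1.0 / total


inbox = [
    TeamMessage(1, (0.0, 0.0, 0.0), (1.0, 0.0), 0.04, timestamp=9.5),
    TeamMessage(2, (2.0, 1.0, 3.1), (1.2, 0.0), 0.04, timestamp=9.8),
    TeamMessage(3, (-3.0, 0.0, 0.0), (4.0, 2.0), 0.01, timestamp=5.0),  # stale
]
print(fuse_ball(inbox, now=10.0))
```

Inverse-variance weighting lets a teammate that is more certain about the ball, for instance because it stands right next to it, dominate the combined estimate, while stale reports are ignored entirely.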

Those are important issues for autonomous mobility too: knowing where other road users are, what the environmental conditions are, how fast the other road users are moving …

Every autonomous vehicle measures its environment with a number of sensors and builds its own model of its immediate surroundings. Otherwise things would get very dangerous very quickly.

The playing field is a great test environment in that respect …

The disadvantage with our robots is that many of the sensors used in cars would be too heavy. Our cameras weigh only a few grams. The bigger and heavier the robot, the higher its energy consumption and the harder the impact when it falls. A humanoid robot needs energy just to stand. People, by comparison, are impressively efficient.

It’s nice to see that people can do something better than a robot for a change.

After thousands of years of evolution, people are already quite optimized: I can move a weight of about 80 kilograms for quite a long time on a relatively small energy supply. Some water and a sandwich are plenty for several hours. Today's robots are still a long way from that.

Photos: University of Bremen/Tim Laue

The second part of this interview deals with how robots detect and process their environment.