This is the second part of our earlier story about what industry can learn from football robots.

A goalkeeper has to stop a shot from hitting the net, and in robot football this is not very different. Using data collected by its sensors, the robot goalkeeper estimates how best to stop the ball, entirely automatically.

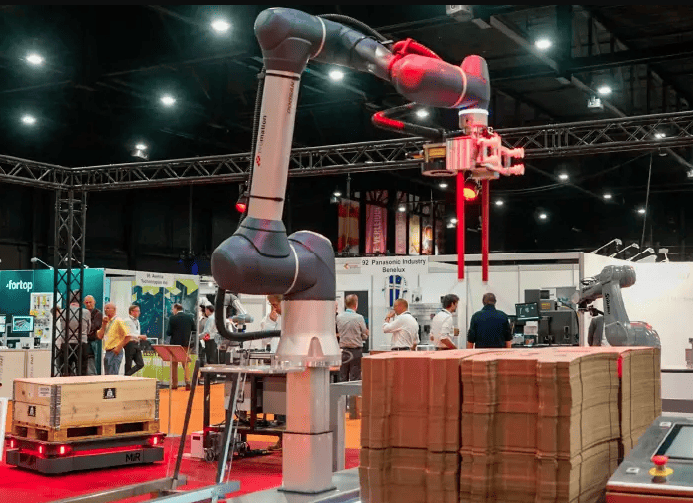

In industry, too, more and more work is being automated. Robots are taking on a growing range of tasks: milking cows, cleaning large industrial halls, handling luggage at airports, inspecting hazardous environments, and many more. But many of these robots still lack an open worldview. As a result, they often cannot react properly to unexpected situations, such as a cow that has escaped from the cowshed where it is supposed to be. The robots get stuck and need human help, which costs a lot of time. Football robots (and care robots) in the RoboCup competitions must be flexible enough to respond to unexpected situations, and it is precisely this flexibility that industrial robots sometimes lack.

The TU/e, in cooperation with five companies (Lely Industries, Vanderlande, ExRobotics, Diversey and Rademaker), is investigating how industrial robots can gain the same flexibility as football robots. In this project, companies from different industries are coming together to develop a ‘world map’ that robots can update themselves using the data they collect. The goal is a technique that can be used in any robot, whether it feeds cows or scrubs halls. The consortium has 1.5 million euros available for four years of research, and three research groups within the TU/e are involved. The budget consists of investments by the High Tech Systems Center (TU/e) and the participating companies, supplemented by a subsidy from the Top Sector HTSM (TKI). The project has now been running for almost nine months, and the consortium hopes to present its first tentative results at the end of the year.

Jesse Scholtes leads this project from the High Tech Systems Center (HTSC) of the TU/e and explains: “We are not yet at the point of having developed universal software that works on every robot. The first step is to implement the sensor technology and link it to the programmed map. Ultimately, you want to reach a point where a robot can use everything it sees, hears and scans to improve the map of its surroundings. That will enable the system to respond more appropriately to a given situation.”

Take a car factory. “A classic robot works on a single car line; the system is pre-programmed so that each component ends up in a fixed location. New systems must be able to build several car models at the same time by recognizing which part belongs to which model,” says Scholtes. “To do that, we have to move away from a pre-programmed environment: the more variation on the line, the higher the development costs. Growing complexity puts a limit on scalability. We can keep this manageable by creating an open worldview, in which robots recognize objects and understand how to respond to them.” Scholtes sees developments in this area moving fast, with cheaper sensors and more powerful computers. According to him, this allows systems to map their environment better; the next step is to understand it, so that cleaning robots, for example, take into account the human cleaners working around them.

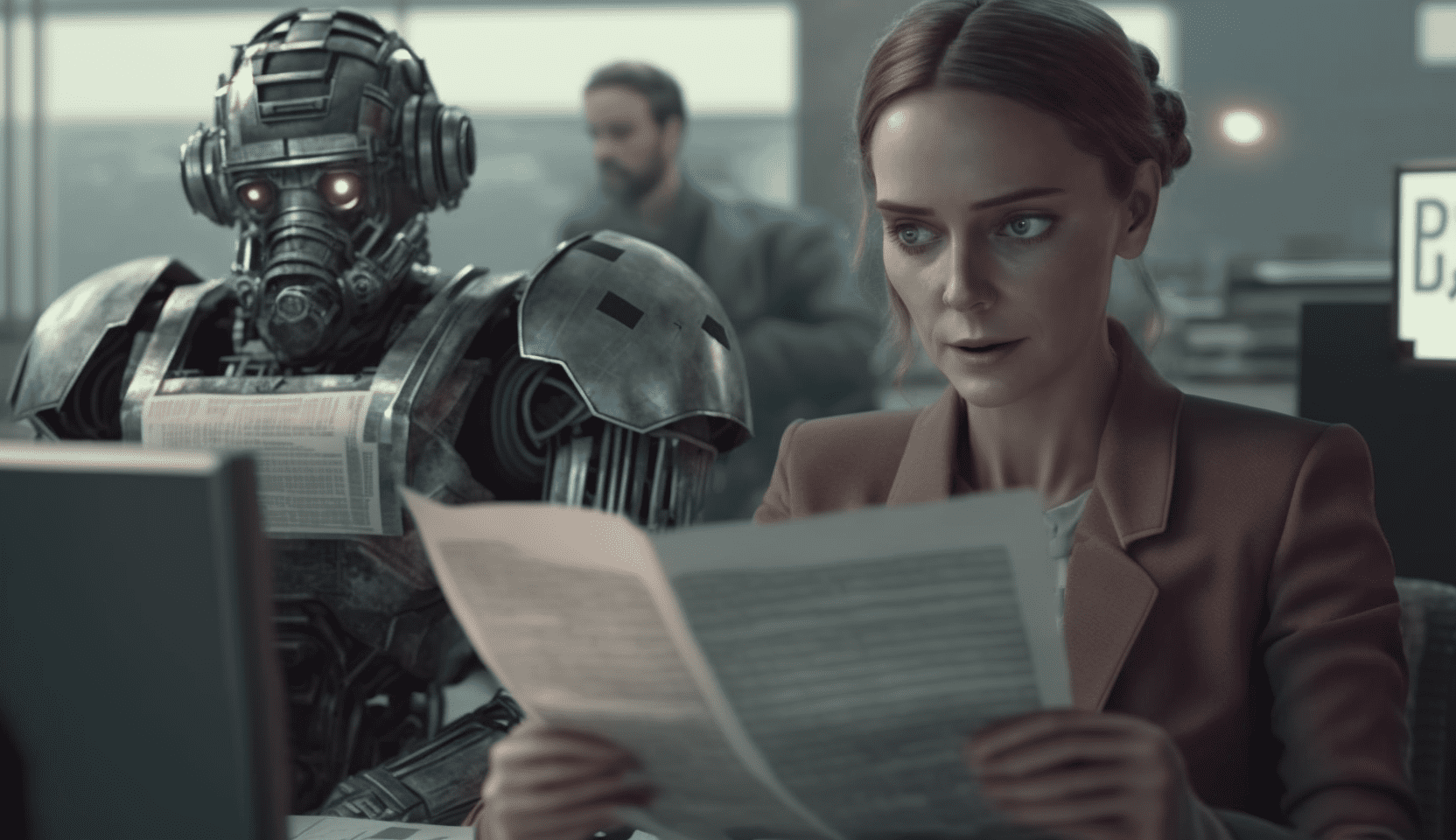

“Or take this cup of coffee,” says Scholtes, raising his almost empty cup. “A robot must be able to see that this is plastic, which has different properties than a mug. Squeezing a plastic cup hard is a bad idea, and lifting a full cup takes more force than lifting an empty one. The aim is for the robot to be able to do something with all the information it receives. That information comes mainly from the system's own sensors, but it must also be possible for robots to ask people for an explanation, all so that they can collect more data and get a better picture of a situation. Right now, you could say that robots are ‘blind’: it is difficult for them to distinguish between objects. But interaction with people is also an important part of this. How does such a robot react to children playing in an airport departure hall?”

Iwan de Waard, general manager of ExRobotics, confirms this. His company develops robots that inspect locations where hazardous substances are present. According to the company, it is the only one in the world that can build robots to the ATEX standard for explosive atmospheres. “Ideally, you want to move towards a kind of language in which inspection robots can show human inspectors that they have seen them and are taking them into account. Right now, people are often unsure: has the robot seen me? Is it adjusting its speed? Can I even communicate with this device? People are very good at this among themselves; look at motorists and cyclists, who give each other non-verbal signals so they understand each other. That must also be possible between robots and people. We are not a software company, so developing something like that is extremely difficult for us. That is why this kind of collaboration is important to us: for a relatively small financial commitment, we work with a strong research institution such as Eindhoven University of Technology and with four commercial partners.”

According to Scholtes, there is another reason why this form of collaboration benefits industry: “Funding often comes from the EU, and then you work with institutes from all sorts of countries. But after such a project, the contact usually fades; the distances are literally too great. Here, the short lines of communication let us contribute to the ecosystem together with industry. You learn to think about situations you hadn’t considered beforehand. The companies don’t just provide money and then sit back; they are actively involved and invest a great deal of time. That dynamic is what makes co-located research so interesting. We are working on a separate use case with each company, but in the long term we are all working towards the same goal: robots that understand the world better.”