You ask Siri to set a reminder on your phone, Netflix suggests movies based on your previous choices, and your thermostat heats up the living room when you leave work. These are all relatively innocent examples of the use of artificial intelligence. In healthcare, AI is now used to recognise tumours on CT scans or to power a virtual nurse such as Molly. In the financial sector, credit card companies use it to carry out fraud checks, and banks use algorithms to determine whether someone can get a loan. AI is everywhere.

AI does not work without data. But what happens to all this data? Are municipalities free to use all the data they collect about you just like that? In this story, we looked into it.

How should we deal with this? Should we just let it happen, or draw a line? And if we draw a line, who sets that boundary? And who supervises all the new things that are being built?

Microsoft

In a blog post, Brad Smith, Microsoft’s president and head of legal, advocates rules and laws for facial recognition technology. According to Smith, this technology carries all kinds of dangers, from biased algorithms to privacy violations and governments that can keep an eye on every citizen. According to him, it is about time that governments started to concern themselves with this.

The European Union

The European Union has a group of 52 experts from all kinds of sectors who advise on what regulations for artificial intelligence should look like. They have been working on this since December, and the first version of the guidelines for trustworthy AI has been completed. These guidelines address ethical dilemmas that need to be considered during the design process. They state, for example, that people must come first and that special attention must be paid to vulnerable groups and minorities, as well as to situations in which inequality of power or access to information can occur, such as dealings between companies and consumers or employees. According to the document, more attention should also be paid to checking AI systems afterwards by means of a checklist. But because the field changes so quickly, this should not become a box-ticking exercise. The final version of these guidelines should be ready in March.

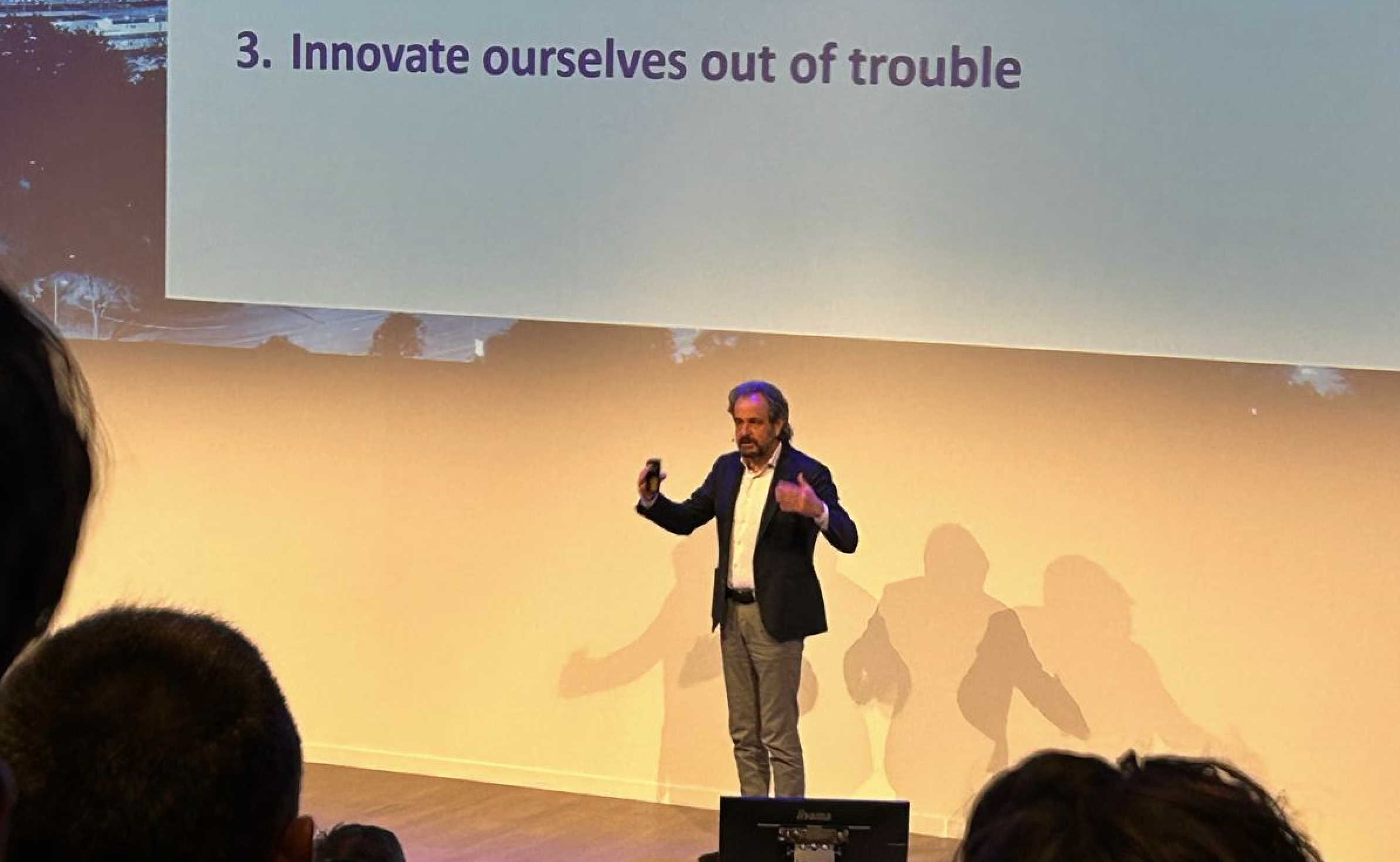

ISO

“It is incredibly important that we all speak the same language,” says Jana Lingenfelder. Besides working for IBM, she is working on an AI standard for ISO. The International Organization for Standardization (ISO) develops international standards for all kinds of markets and industries. “We need an international standard in which everyone uses the same definitions and basic rules that designers and companies can refer to. AI goes beyond national borders, which is why it is good that the EU is working on this and drawing up guidelines.”

Jan Schallaböck, a German lawyer, focuses mainly on privacy. At ISO, he is helping to develop a standard that requires tech companies, among others, to address user privacy at the earliest possible stage of their product or service.

He agrees with Lingenfelder, but he sees problems in enforcing these rules: “Keeping a close eye on artificial intelligence takes time, and many governments and other supervisory bodies do not have this capacity. Moreover, to what extent are they able to assess how an algorithm makes a decision? Even for many experts, that is quite a job. Not to mention responsibility and liability. Will this be the responsibility of the users? Of the platforms? Or of the people who develop AI? And how do you ensure that an algorithm makes an ethical decision?”

Ethical or numerical?

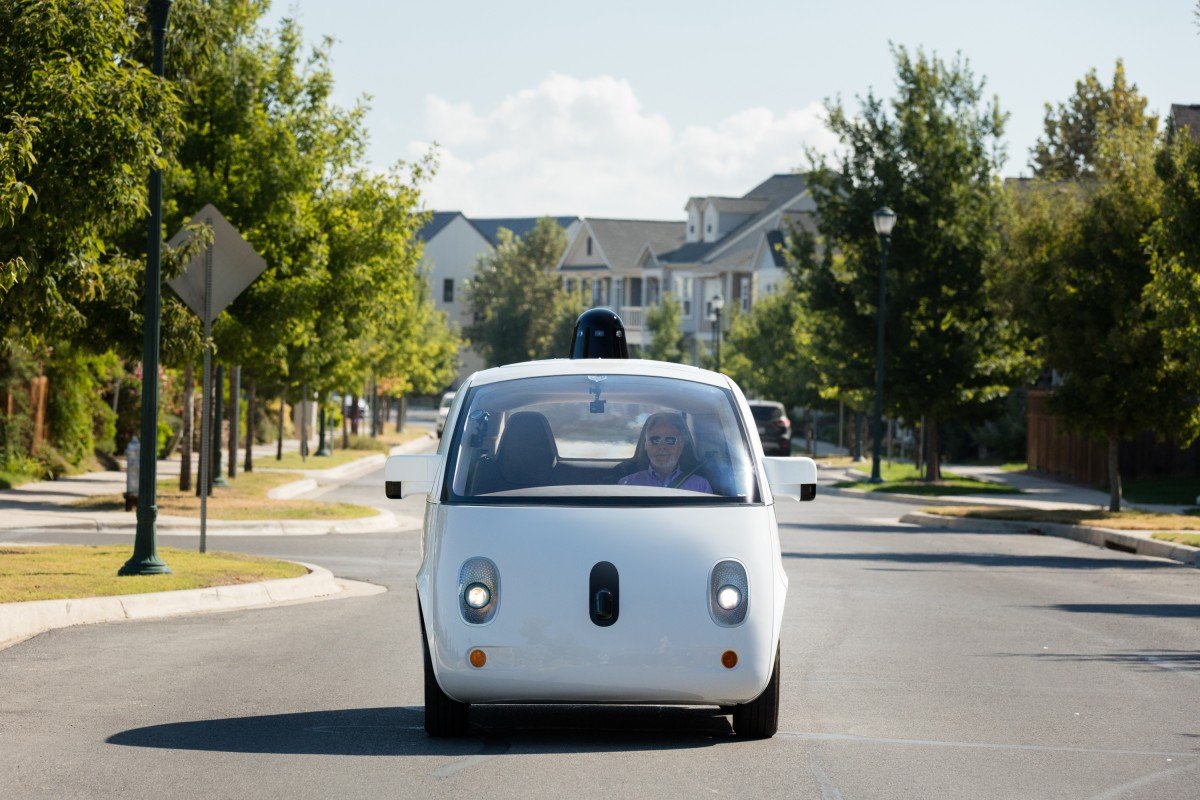

Take a self-driving car that has to choose between hitting a cyclist and hitting a pedestrian in a collision. Such choices must be built into systems. According to Christian Wagner, there is a common misconception here: artificial intelligence cannot make ethical decisions at all. Wagner teaches at the University of Nottingham and is director of LUCID, which conducts research to better understand, capture and reason about uncertain data. According to him, people often speak of strong AI, in which technology, like humans, can judge right from wrong. “But we are far from that. First of all, we have to make sure that systems are stable. A self-driving car should not suddenly do unexpected things. That works with numbers and probability calculations.”

He does think it is good to set standards and guidelines, but he doubts whether such a model can be maintained: “Technology develops very quickly, and designing a new model every time is very difficult. Do you have to create a new model for supervisors every time?” Schallaböck also thinks this is a difficult issue: “Standards like the ones ISO makes are always good, but do you have to create a new standard for every application of AI? These procedures are time-consuming and very expensive.”

Then there is the question of who these supervisors should be because, as Lingenfelder already noted, AI goes beyond national borders and has an international impact at all levels of society. The three do not yet have a clear answer to this question. Should an EU body perhaps be set up to monitor compliance with the rules? Schallaböck: “We now have the GDPR, but that is far from enough. We do not yet have an answer to the dangers of AI. It is a danger not only to democracy but also to the rights of individuals.”

Photo: Waymo