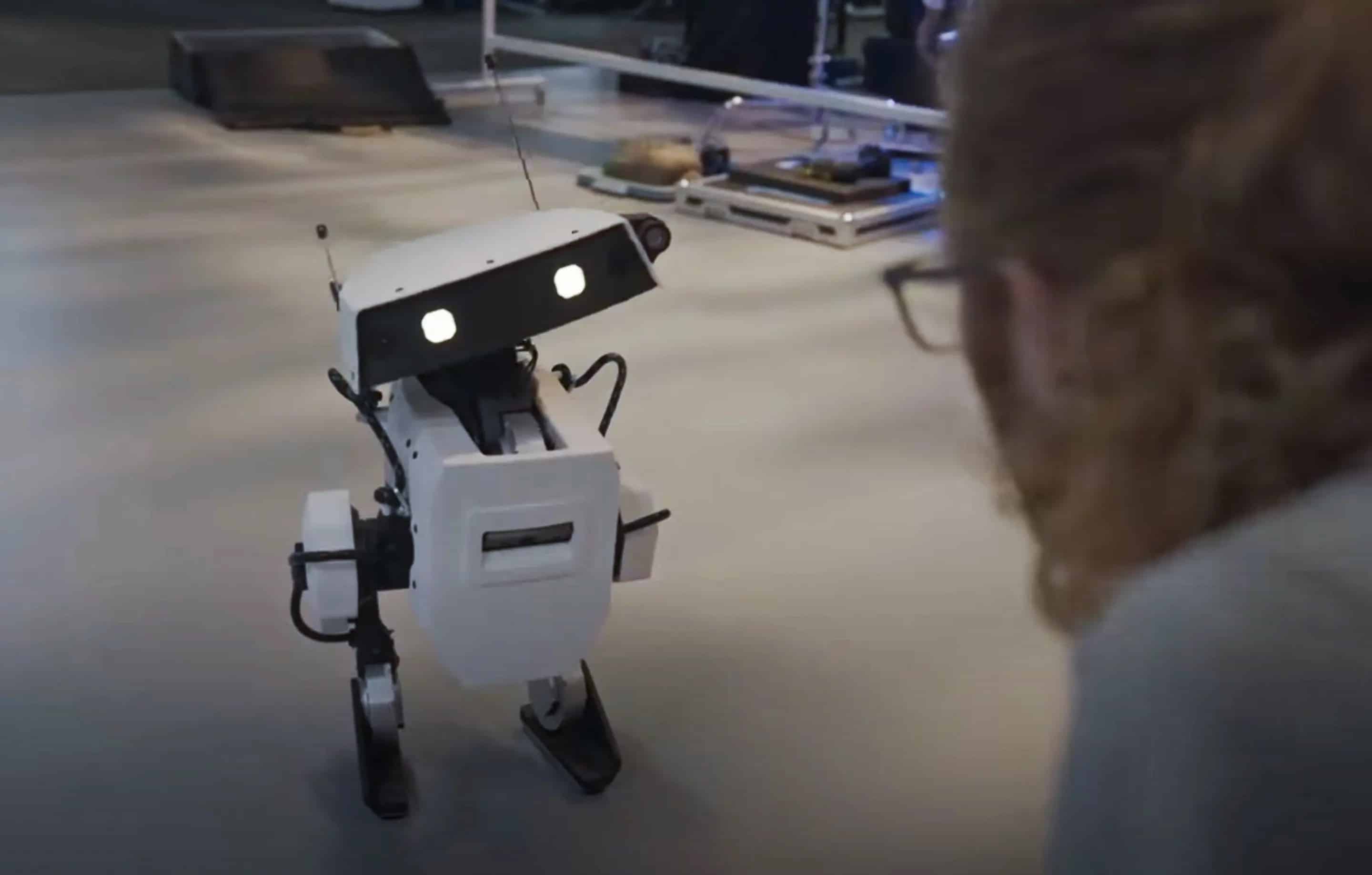

Robots have eyes, arms and can react to stimuli. In movies, as well as in real-life applications, they are designed with human features, making them more similar to us. But does it make sense to include anthropomorphisms in all of them?

A study in engineering psychology conducted by Technische Universität Berlin and Humboldt-Universität zu Berlin investigated the extent of the use of human-like features in robots. The study shows that human characteristics do not always have beneficial impacts.

The psychologists evaluated thousands of scientific articles for their meta-analysis, drawing on dozens of studies with around 6000 test subjects to determine under which circumstances anthropomorphic design is effective. The results were published in the latest issue of Science Robotics and suggest that context plays an important role in human-robot interaction.

Eileen Roesler from TU Berlin, who holds an M.Sc. in psychology with a focus on human performance in socio-technical systems, led the study. “We noticed a positive effect for perception, but not for perceived safety. A robot doesn’t become safer just because it looks more human.”

Anthropomorphic design features do not only refer to appearance. They also include features that involve communication, movement and definition.

Tasks and circumstances are the main elements to take into account when studying human-robot interactions. Despite a widely held belief – and previous research – suggesting that anthropomorphization leads to positive effects, the picture is more nuanced. Giving robots human features does not necessarily improve the experience of interacting with them, especially when it comes to performing tasks together.

“Whereas we found a consistent positive effect in the social domain, we do not find any in the service domain, and just a few in the industrial domain,” Roesler explains.

Positives

Within social interaction, anthropomorphism is important, as sociability is uniquely human. The German researchers also conducted experiments with people who had never interacted with robots before. “The aim was to stimulate them cognitively or emotionally. It is really sensible to make robots move or behave like humans because we are able to master personal interactions. Individuals who have no idea how to interact with new technologies still know how to interact with humans,” says Roesler.

Therefore, anthropomorphisms in robots have positive effects in social sectors, such as care. Interaction can become engaging, as the social aspect is at its core.

Negatives

Despite the positive effects of social interaction, it is easy to cross the line and make the relationship with ‘cyborgs’ uncomfortable. Roesler clarifies: “It is a positive thing to make robots more human-like to a slight degree. When robots are perfect human replicas, they cause what is known as the uncanny valley effect, as human beings then experience them as creepy.”

When robots and humans have to fulfill a task together, things change. An example is the industrial domain. For instance, embedding eyes that look around randomly affects trust and perceived reliability. In this field of application, human features make less sense at the moment.

According to Roesler: “Humans perceive industrial robots as perfect automations. We know they are super precise, whereas we, as humans, are prone to make mistakes. When robots are designed in a more human-like manner, we expect them to fail, because humans fail.” Such perfection may be perceived as quite overwhelming.

Moreover, adding human characteristics to robots raises concerns about the very nature of the relationship we have with them at work. Is it a tool, a partner, or somebody stealing my job? Adding arbitrary features to cyborgs in this field of application can be counterproductive.

Expectations

Roesler points out that, in the relationship between humans and robots, it is all about conforming to expectations. “When I see a robot with eyes and a mouth, I expect it to see me and to talk to me. If I design a robot to look like a tool, I do not expect it to talk to me; it would be creepy and feel uncanny. It is really important to people that design meets the expectations.”

In this regard, the researcher clarifies that functionality should be at the core of robot development. As Roesler also says, design should be the key to making robots and their functionalities more intuitive.

Less perfection

Different options may help in developing better anthropomorphized robots, bridging the gap with humans. For instance, letting them fail more often would help increase trust.

In some cases, restricting functionality would be a good idea. “Let’s imagine a robot operating in a hospital that delivers medications. It doesn’t have a mechanical arm, so it can’t access the elevator. Design on an anthropomorphic level could be used, by giving it a nice human voice to ask bystanders to help it out,” says Roesler. This way, robots would initiate contact with people in a more natural way.

“Robots are not caged up anymore, they are interacting with us in our world. Making them behave as we do might not be a bad idea, as we would then be able to predict their behavior more easily,” Roesler goes on to explain.

Robots and society

Aside from all the comments on design and functionality that can be made, more needs to be discussed, according to Roesler. “I think that we, as a society, should think about whether we want machines that simulate human-like features. Do we want robots that recreate human characteristics, such as empathy, for example?”

Expectations, functionality, and integration in society. It is about reflecting on the role we want machines to have in tomorrow’s world. Figuring out how much of our nature we want to embed into them will be crucial to understanding how influential we want them to be in our lives.

Read the Science Robotics article here: A meta-analysis on the effectiveness of anthropomorphism in human-robot interaction
