Only a universal law setting rules of conduct for robots and the use of artificial intelligence can protect humankind against their brutal abuse, said Carlo van de Weijer, director of the Eindhoven AI Systems Institute (EAISI), during last week’s High Tech Next information day. But it remains to be seen how such a law would get off the ground.

The logical step would be for the United Nations to draw up such a law. It is the only global governmental body we have that could do something like that. “It is also on the agenda there. However, not every country is a member of the United Nations,” said Van de Weijer. So how can this problem be solved? “No one really knows.”

If such a universal law for AI were to come into being, it would be a “gigantic pile of paper”, according to Van de Weijer. “As big as a bible.”

Three rules for robots

Futurologist and science fiction writer Isaac Asimov once drew up three rules for robots that are still considered valid in science today. The first is that a robot must not harm people and must prevent people from being harmed. The second is that a robot must obey human beings, unless this brings it into conflict with the first rule. The third is that a robot must protect itself, except when this conflicts with the first or second rule.

Although these rules are very clear, they are no longer respected by everyone. “In my opinion, we have already gone beyond that point,” Van de Weijer states.

Drones that shoot

To illustrate to the audience what can happen when people abuse AI, Van de Weijer showed YouTube videos. One featured drones that use facial recognition to kill a person. The clip was fictional, but it was hardly something to be thrilled about.

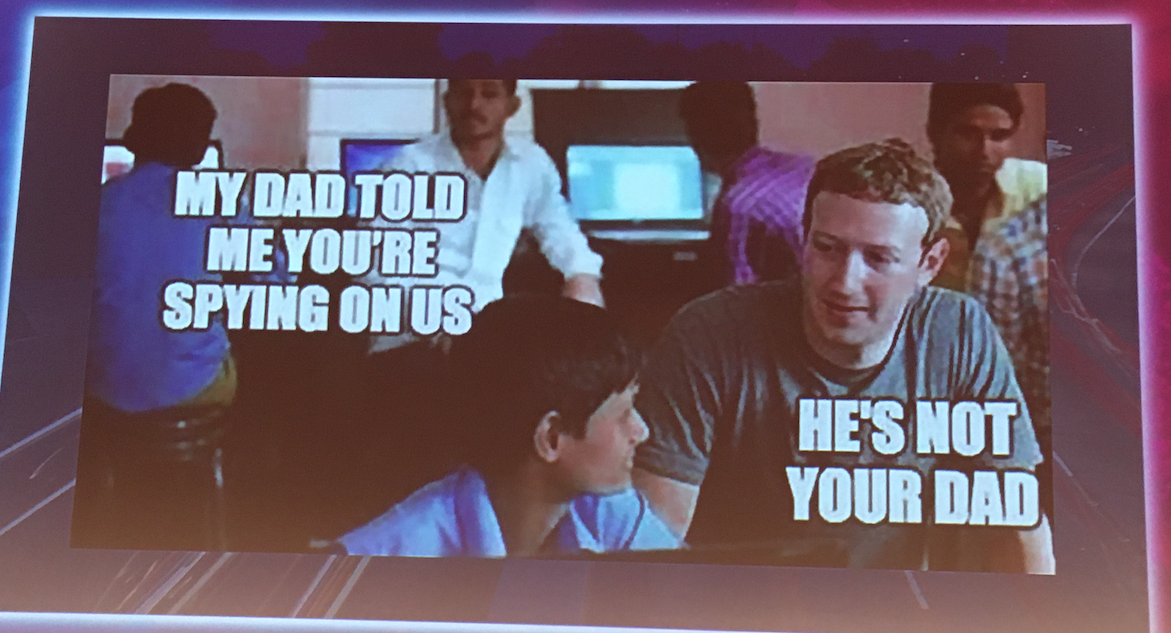

In another video, he showed how people could benefit from a self-driving car equipped with cameras that continuously film what is happening in the surrounding area. If Google were to own 1% of these cars in the future, it would have an enormous foothold in the information market, Van de Weijer said.

That is because of all the data the cars collect on the road and in parking lots. This data is worth a lot of money, and the company that owns the cars can sell it.

Selling privacy to a car manufacturer

The disadvantage is that passengers and motorists lose their privacy. The same applies to people in the vicinity of the car. They too are filmed without their permission and probably have no idea that it’s happening.

Up until now, this type of car has not been on the consumer market. But it can’t be ruled out that it will be introduced in the future. In theory, a model is conceivable whereby consumers can drive a car ‘for free’ in exchange for handing over their data, said Van de Weijer. ‘Free’ is in quotes because it’s not really free. After all, consumers who accept an offer of this kind are selling their privacy.

Who will guard the robots?

Van de Weijer foresees a leading role for the AI industry in Eindhoven in the field of people-friendly AI and robotics. There is not just a great need for this. There’s plenty of scope for it as well.

The superpowers China and the US are indeed investing a lot of capital in the development of new AI, Van de Weijer said. But in China, how this AI is used is determined top-down by the government, which is not in the habit of making rules that benefit the rest of the world. In the US, the market (for example Google and Facebook) determines how AI is used. These companies are generally keen to capitalize on consumer data. This means that consumers’ interests are not always safeguarded against misuse.

In Eindhoven, rules for the responsible use of AI might be devised that could serve as an example for the rest of the world.